A collection of resources for reflecting on the implications of deploying machine learning and artificial intelligence in socio-technical systems

tl;dr:

- totally unbiased machine learning is impossible; 'fairness' often risks becoming just another business buzzword

- think hard about the politics and the social implications of ML/AI applications

- in some cases the ethical choice is not to deploy ML/AI at all

Datafication of Science

lecture by Kate Crawford (Distinguished AI Scholar) and Trevor Paglen (artist), followed by a discussion

https://peertube.fr/videos/watch/630ac6bd-c14b-4fe8-9671-64bbaff6e57d

Are Artificial Intelligence and Machine Learning really the right metaphors for the training sets that feed into automated processes? Kate Crawford and Trevor Paglen look into the production of training data and uncover its historical origins, labor practices, infrastructures, and epistemological assumptions, with biases and skews built in from the outset. Starting from these observations, they ask: Is there a possibility for decentralized, vernacular AI? How does computational centralization relate to reduction?

excerpted from the recording of the event 'Stop Making Sense' at HKW, 12 Jan 2019

https://www.hkw.de/en/programm/projekte/veranstaltung/p_146043.php

AI TRAPS: Automating Discrimination

Day 1 https://www.youtube.com/watch?v=ZzAdo5drZmA

Day 2 https://www.youtube.com/watch?v=7SqHtroA8U8

Day 1:

- THE TRACKED & THE INVISIBLE: From Biometric Surveillance to Diversity in Data Science
  Adam Harvey, Sophie Searcy, moderated by Adriana Groh

- AI FOR THE PEOPLE: AI Bias, Ethics & The Common Good
  Maya Indira Ganesh, Slava Jankin, moderated by Nicole Shephard

- WHAT IS A FEMINIST AI? Possible Feminisms, Possible Internets
  Charlotte Webb, moderated by Lieke Ploeger

Day 2:

- HOW IS GOVERNMENT USING BIG DATA?
  Crofton Black, moderated by Daniel Eriksson

- RACIAL DISCRIMINATION IN THE AGE OF AI: The Future of Civil Rights in the United States
  Mutale Nkonde, moderated by Rhianna Ilube

- ON THE POLITICS OF AI: Fighting Injustice & Automatic Supremacism
  Dia Kayyali, Os Keyes, Dan McQuillan, moderated by Tatiana Bazzichelli

Discriminating Systems: Gender, Race and Power in AI

S.M. West, M. Whittaker, K. Crawford, AI Now Institute (2019)

https://ainowinstitute.org/discriminatingsystems.pdf

Machine Learning Is a Co-opting Machine

Solon Barocas (2019)

https://www.publicbooks.org/machine-learning-is-a-co-opting-machine/

Letting Go of Technochauvinism

Meredith Broussard (2019)

https://www.publicbooks.org/letting-go-of-technochauvinism/

AI Now 2018 Report

M. Whittaker et al., AI Now Institute, NYU (2018)

https://ainowinstitute.org/AI_Now_2018_Report.pdf

After a Year of Tech Scandals, Our 10 Recommendations for AI

AI Now Institute, 6 Dec 2018

https://medium.com/@AINowInstitute/after-a-year-of-tech-scandals-our-10-recommendations-for-ai-95b3b2c5e5

Artificial intelligence and privacy (Kunstig intelligens og personvern)

Report by Datatilsynet (the Norwegian Data Protection Authority), 11 Jan 2018

Most applications of artificial intelligence (AI) require huge volumes of data in order to learn and make intelligent decisions. Artificial intelligence is high on the agenda in most sectors due to its potential for radically improved services, commercial breakthroughs and financial gains. This report aims to describe and help us understand how our privacy is affected by the development and application of artificial intelligence.

https://www.datatilsynet.no/en/about-privacy/reports-on-specific-subjects/ai-and-privacy/

Anatomy of an AI System

The Amazon Echo as an anatomical map of human labor, data and planetary resources

by Kate Crawford and Vladan Joler, Sep 2018

https://anatomyof.ai/

Towards an antifascist AI

Dan McQuillan

http://danmcquillan.io/ai_and_antifascism.html

Where fairness fails: data, algorithms, and the limits of antidiscrimination discourse

Anna Lauren Hoffmann, Information, Communication & Society, 22:7, 900-915, 2019

https://doi.org/10.1080/1369118X.2019.1573912

People’s Councils for Ethical Machine Learning

Dan McQuillan, Social Media + Society, 2018

https://doi.org/10.1177/2056305118768303

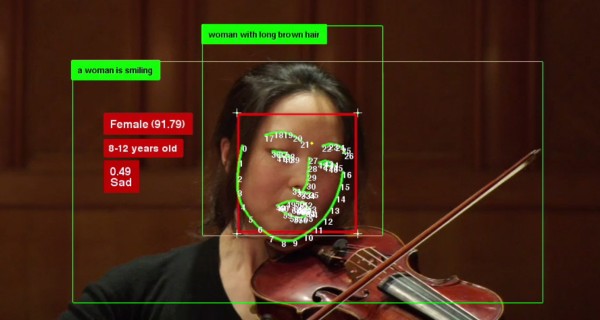

Facial recognition is the plutonium of AI

by Luke Stark, XRDS: Crossroads, The ACM Magazine for Students, Volume 25 Issue 3, Spring 2019, Pages 50-55

https://doi.org/10.1145/3313129

Potemkin AI

Many instances of “artificial intelligence” are artificial displays of its power and potential

by Jathan Sadowski, 6 Aug 2018

http://reallifemag.com/potemkin-ai/

Big Data Analytics and Human Rights

by Mark Latonero

https://doi.org/10.1017/9781316838952.007

Governing Artificial Intelligence

Upholding Human Rights & Dignity

Mark Latonero, Data & Society, 10 Oct 2018

https://datasociety.net/output/governing-artificial-intelligence/

Algorithmic Accountability: A Primer

Data & Society report by Robyn Caplan, Joan Donovan, Lauren Hanson, and Jeanna Matthews, Apr 18 2018

https://datasociety.net/output/algorithmic-accountability-a-primer/

Critical Perspectives on Artificial Intelligence and Human Rights

by Melanie Penagos, June 20 2018

https://points.datasociety.net/critical-perspectives-on-artificial-intelligence-and-human-rights-971bfc02d3c

The Seductive Diversion of ‘Solving’ Bias in Artificial Intelligence

Trying to “fix” A.I. distracts from the more urgent questions about the technology

Julia Powles, 7 Dec 2018

https://medium.com/s/story/the-seductive-diversion-of-solving-bias-in-artificial-intelligence-890df5e5ef53

AI on the Ground

Initiative at Data & Society (2019)

https://datasociety.net/research/ai-on-the-ground/

AI in Context - The Labor of Integrating New Technologies

Alexandra Mateescu, Madeleine Clare Elish, Data & Society Report, 30 Jan 2019

https://datasociety.net/output/ai-in-context/

Awful AI

Awful AI is a curated list to track current scary usages of AI, hoping to raise awareness of its misuses in society

https://github.com/daviddao/awful-ai/blob/master/README.md

Odd Numbers

Algorithms alone can’t meaningfully hold other algorithms accountable

by Frank Pasquale, 20 August 2018

http://reallifemag.com/odd-numbers/

Myth and the Making of AI

by Molly McCue and Kat Holmes, 16 Jul 2018

https://jods.mitpress.mit.edu/pub/holmes-mccue

Facial Recognition Is the Perfect Tool for Oppression

With such a grave threat to privacy and civil liberties, measured regulation should be abandoned in favor of an outright ban

by Woodrow Hartzog, 2 Aug 2018

https://medium.com/s/story/facial-recognition-is-the-perfect-tool-for-oppression-bc2a08f0fe66

Friction-Free Racism

Surveillance capitalism turns a profit by making people more comfortable with discrimination

Chris Gilliard, 15 Oct 2018

http://reallifemag.com/friction-free-racism/

Controlled Measures

Phrenology lies at the heart of biometric governance

by R. Joshua Scannell, 17 Sep 2018

http://reallifemag.com/controlled-measures/

Big Brother’s Blind Spot

"Mining the failures of surveillance tech"

by Joanne McNeil, The Baffler no.40, July 2018

https://thebaffler.com/salvos/big-brothers-blind-spot-mcneil

The Dangers of Facial Analysis

by Joy Buolamwini, June 21 2018

https://www.nytimes.com/2018/06/21/opinion/facial-analysis-technology-bias.html

Design Justice, A.I., and Escape from the Matrix of Domination

by Sasha Costanza-Chock, updated 18 Jul 2018

https://jods.mitpress.mit.edu/pub/costanza-chock

AI in the Doctor-Patient Relationship

"Identifying Some Legal Questions We Should Be Asking"

by Claudia Haupt, June 19 2018

https://points.datasociety.net/ai-in-the-doctor-patient-relationship-1b44dd1b24c8

Engine Failure

Safiya Umoja Noble and Sarah T. Roberts on the Problems of Platform Capitalism, Logic Issue 3 "Injustice"

https://logicmag.io/03-engine-failure/

Machine Bias: There’s software used across the country to predict future criminals. And it’s biased against blacks.

by Julia Angwin, Jeff Larson, Surya Mattu and Lauren Kirchner, ProPublica, 23 May 2016

https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing

Artificial Intelligence’s White Guy Problem

Kate Crawford, The New York Times (2016)

https://www.nytimes.com/2016/06/26/opinion/sunday/artificial-intelligences-white-guy-problem.html

Critical Questions For Big Data - Provocations for a cultural, technological, and scholarly phenomenon

danah boyd & Kate Crawford, Information, Communication & Society, 15:5, 662-679, 2012

https://doi.org/10.1080/1369118X.2012.678878

Machine Learning Harassment

Caroline Sinders

https://xyz.informationactivism.org/en/machine-learning-harassment

Weapons of Math Destruction - How Big Data Increases Inequality and Threatens Democracy

by Cathy O'Neil (2016)

https://www.penguinrandomhouse.com/books/241363/weapons-of-math-destruction-by-cathy-oneil/

Algorithms of Oppression - How Search Engines Reinforce Racism

by Safiya Umoja Noble (2018)

https://nyupress.org/books/9781479837243/

Automating Inequality - How High-Tech Tools Profile, Police, and Punish the Poor

by Virginia Eubanks (2018)

https://us.macmillan.com/books/9781250074317

Artificial Unintelligence - How Computers Misunderstand the World

by Meredith Broussard (2018)

https://mitpress.mit.edu/books/artificial-unintelligence

The AI Delusion

by Gary Smith (2018)

https://global.oup.com/academic/product/the-ai-delusion-9780198824305?cc=no&lang=en&


Systemic Algorithmic Harms

Theories of “bias” alone will not enable us to engage in critiques of broader socio-technical systems.

Kinjal Dave, 31 May 2019

https://points.datasociety.net/systemic-algorithmic-harms-e00f99e72c42

The problem with AI ethics

Is Big Tech’s embrace of AI ethics boards actually helping anyone?

James Vincent, 3 Apr 2019

https://www.theverge.com/2019/4/3/18293410/ai-artificial-intelligence-ethics-boards-charters-problem-big-tech

Analyzing & Preventing Unconscious Bias in Machine Learning

https://www.infoq.com/presentations/unconscious-bias-machine-learning

A.I. Could Worsen Health Disparities

In a health system riddled with inequity, we risk making dangerous biases automated and invisible.

Dhruv Khullar, 31 Jan 2019

https://www.nytimes.com/2019/01/31/opinion/ai-bias-healthcare.html

Automating Society – Taking Stock of Automated Decision-Making in the EU

A report by AlgorithmWatch in cooperation with Bertelsmann Stiftung, supported by the Open Society Foundations

https://algorithmwatch.org/en/automating-society/

The Great White Robot God - Artificial General Intelligence and White Supremacy

by David Golumbia

https://medium.com/@davidgolumbia/the-great-white-robot-god-bea8e23943da

Five theses on technoliberalism and the networked public sphere

by Damien Smith Pfister and Misti Yang, Sep 2018

https://doi.org/10.1177/2057047318794963

The Environment is Not a System

by Tega Brain, July 2018

http://www.aprja.net/the-environment-is-not-a-system/

How Companies Use Personal Data Against People

Automated disadvantage and personalized manipulation? A working paper on the societal ramifications of the commercial use of personal information, with a focus on automated decision-making, personalization, and data-driven behavioral change.

Working paper by Wolfie Christl, Cracked Labs, October 2017

http://crackedlabs.org/en/data-against-people

Corporate Surveillance in Everyday Life

Report: How thousands of companies monitor, analyze, and influence the lives of billions. Who are the main players in today’s digital tracking? What can they infer from our purchases, phone calls, web searches, and Facebook likes? How do online platforms, tech companies, and data brokers collect, trade, and make use of personal data?

by Wolfie Christl, Cracked Labs, June 2017

http://crackedlabs.org/en/corporate-surveillance

Networks of Control

A Report on Corporate Surveillance, Digital Tracking, Big Data & Privacy

by Wolfie Christl and Sarah Spiekermann, 2016

http://crackedlabs.org/en/networksofcontrol

Glitch capitalism: How cheating AIs explain our glitchy society

by Malcolm Harris, 23 April 2018

https://nymag.com/selectall/2018/04/malcolm-harris-on-glitch-capitalism-and-ai-logic.html

Ungoverned Space: How Surveillance Capitalism and AI Undermine Democracy

by Taylor Owen, March 20 2018

https://www.cigionline.org/articles/ungoverned-space

The Automation Charade

Finding the human in the machine

by Astra Taylor

https://logicmag.io/05-the-automation-charade/

Selling Smartness

"Corporate Narratives and the Smart City as a Sociotechnical Imaginary"

Jathan Sadowski and Roy Bendor, 16 Oct 2018

http://journals.sagepub.com/doi/full/10.1177/0162243918806061

Stop Saying 'Smart Cities'

"Digital stardust won’t magically make future cities more affordable or resilient."

by Bruce Sterling, Feb 12 2018

https://www.theatlantic.com/technology/archive/2018/02/stupid-cities/553052/

The Data Is Ours!

"What is big data? And how do we democratize it?"

by Ben Tarnoff, Logic Issue 4 "Scale"

https://logicmag.io/04-the-data-is-ours/

Weaponised AI is coming. Are algorithmic forever wars our future?

"The US military is creating a more automated form of warfare – one that will greatly increase its capacity to wage war everywhere forever."

by Ben Tarnoff, 11 Oct 2018

https://www.theguardian.com/commentisfree/2018/oct/11/war-jedi-algorithmic-warfare-us-military

Machine learned cruelty and border control

by Flavia Dzodan, June 19 2018

https://artificeofintelligence.org/machine-learned-cruelty-and-border-control/

Personal Panopticons

"A key product of ubiquitous surveillance is people who are comfortable with it"

by L. M. Sacasas, 5 Nov 2018

https://reallifemag.com/personal-panopticons/

Big Other: Surveillance Capitalism and the Prospects of an Information Civilization.

by Shoshana Zuboff, Journal of Information Technology 30 (2015): 75–89

https://ssrn.com/abstract=2594754

The challenges of platform capitalism: Understanding the logic of a new business model

by Nick Srnicek, Juncture 23(4), March 2017

https://doi.org/10.1111/newe.12023

Deceived By Design

How tech companies use dark patterns to discourage us from exercising our rights to privacy

Forbrukerrådet, June 27 2018

https://fil.forbrukerradet.no/wp-content/uploads/2018/06/2018-06-27-deceived-by-design-final.pdf

Weaponizing the Digital Influence Machine

"The Political Perils of Online Ad Tech"

by Anthony Nadler, Matthew Crain, and Joan Donovan, Data & Society, 17 Oct 2018

https://datasociety.net/output/weaponizing-the-digital-influence-machine/

The Darkness at the End of the Tunnel: Artificial Intelligence and Neoreaction

by Shuja Haider, Viewpoint (2017)

https://www.viewpointmag.com/2017/03/28/the-darkness-at-the-end-of-the-tunnel-artificial-intelligence-and-neoreaction/

Overpowered Metrics Eat Underspecified Goals

by David Manheim, September 29 2016

https://www.ribbonfarm.com/2016/09/29/soft-bias-of-underspecified-goals/

Google and Microsoft have made a pact to protect surveillance capitalism

"Two bitter rivals have agreed to drop mutual antitrust cases across the globe. Why? To fend off the greater regulatory threat of democratic oversight"

by Julia Powles, 2 May 2016

https://www.theguardian.com/technology/2016/may/02/google-microsoft-pact-antitrust-surveillance-capitalism

The Moral Economy of Tech

by Maciej Cegłowski, June 2016

http://idlewords.com/talks/sase_panel.htm

How big data is unfair

by Moritz Hardt, 26 September 2014

https://medium.com/@mrtz/how-big-data-is-unfair-9aa544d739de

The New Octopus

"Technology has spawned new corporate giants. Their control of society’s digital infrastructure threatens our democracy. What should we do about it?"

by K. Sabeel Rahman, Logic Issue 4 "Scale"

https://logicmag.io/04-the-new-octopus/

The Color of Algorithms: An Analysis and Proposed Research Agenda for Deterring Algorithmic Redlining

James A. Allen, 46 Fordham Urb. L.J. 219 (2019)

https://ir.lawnet.fordham.edu/ulj/vol46/iss2/1

Bias detectives: the researchers striving to make algorithms fair

As machine learning infiltrates society, scientists are trying to help ward off injustice.

by Rachel Courtland, June 20 2018

https://www.nature.com/articles/d41586-018-05469-3

Fairness and Abstraction in Sociotechnical Systems

Andrew D. Selbst, danah boyd, Sorelle Friedler, Suresh Venkatasubramanian, Janet Vertesi, ACM Conference on Fairness, Accountability, and Transparency (FAT*), Vol. 1, No. 1

https://ssrn.com/abstract=3265913

Situating Methods in the Magic of Big Data and Artificial Intelligence

M. C. Elish and danah boyd, Sep 2017, Communication Monographs

https://ssrn.com/abstract=3040201

An AI Race for Strategic Advantage: Rhetoric and Risks

Stephen Cave, Seán S. Ó hÉigeartaigh, AAAI/ACM Conference on AI, Ethics, and Society (AIES), 2018

http://www.aies-conference.com/wp-content/papers/main/AIES_2018_paper_163.pdf

Big Data and discrimination: perils, promises and solutions. A systematic review

Maddalena Favaretto, Eva De Clercq and Bernice Simone Elger, Journal of Big Data 2019 6:12

https://doi.org/10.1186/s40537-019-0177-4

Dirty Data, Bad Predictions: How Civil Rights Violations Impact Police Data, Predictive Policing Systems, and Justice

Rashida Richardson, Jason Schultz, and Kate Crawford, 13 February 2019, New York University Law Review Online

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3333423

Ten simple rules for responsible big data research

Zook M, Barocas S, boyd d, Crawford K, Keller E, Gangadharan SP, et al., PLoS Comput Biol 13(3): e1005399 (2017)

https://doi.org/10.1371/journal.pcbi.1005399

Transparency and Explanation in Deep Reinforcement Learning Neural Networks

Rahul Iyer, Yuezhang Li, Huao Li, Michael Lewis, Ramitha Sundar, Katia Sycara

https://arxiv.org/abs/1809.06061

50 Years of Test (Un)fairness: Lessons for Machine Learning

Ben Hutchinson, Margaret Mitchell

https://arxiv.org/abs/1811.10104

Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification

Joy Buolamwini, Timnit Gebru, Proceedings of the 1st Conference on Fairness, Accountability and Transparency, PMLR 81:77-91, 2018.

http://proceedings.mlr.press/v81/buolamwini18a.html

Fairer Machine Learning in the Real World: Mitigating Discrimination Without Collecting Sensitive Data

Michael Veale and Reuben Binns, Big Data & Society 4(2), 2017

https://journals.sagepub.com/doi/10.1177/2053951717743530

Blind Justice: Fairness with Encrypted Sensitive Attributes

Niki Kilbertus, Adrià Gascón, Matt J. Kusner, Michael Veale, Krishna P. Gummadi, Adrian Weller

Recent work has explored how to train machine learning models which do not discriminate against any subgroup of the population as determined by sensitive attributes such as gender or race. To avoid disparate treatment, sensitive attributes should not be considered. On the other hand, in order to avoid disparate impact, sensitive attributes must be examined, e.g., in order to learn a fair model, or to check if a given model is fair. We introduce methods from secure multi-party computation which allow us to avoid both. By encrypting sensitive attributes, we show how an outcome-based fair model may be learned, checked, or have its outputs verified and held to account, without users revealing their sensitive attributes.

https://arxiv.org/abs/1806.03281
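The abstract's point that checking a model for disparate impact requires examining the sensitive attribute can be illustrated with a minimal sketch. Assuming binary model outputs (1 = positive decision) and a separate list of group labels, the hypothetical helpers below compute the positive-prediction rate per group and the ratio of the lowest to the highest rate. This is one common outcome-based check chosen for illustration, not the encrypted, secure multi-party computation method proposed in the paper; the function names and toy data are made up.

```python
from collections import defaultdict


def positive_rate_by_group(predictions, groups):
    """Hypothetical helper: share of positive (1) predictions per sensitive-attribute value."""
    counts = defaultdict(lambda: [0, 0])  # group -> [positives, total]
    for pred, group in zip(predictions, groups):
        counts[group][0] += pred
        counts[group][1] += 1
    return {g: pos / total for g, (pos, total) in counts.items()}


def disparate_impact_ratio(predictions, groups):
    """Hypothetical helper: lowest group positive rate divided by the highest.

    1.0 means every group receives positive predictions at the same rate;
    values near 0 mean one group is almost never selected.
    """
    rates = positive_rate_by_group(predictions, groups)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives any positive prediction
    return min(rates.values()) / highest


# Toy, made-up data: the check cannot be computed without the `groups` column.
predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(positive_rate_by_group(predictions, groups))  # {'a': 0.75, 'b': 0.25}
print(disparate_impact_ratio(predictions, groups))
```

Dropping `groups` from the model's inputs avoids disparate treatment, but it also removes the data needed to run a check like this one; that is the tension the paper sets out to resolve without users revealing their sensitive attributes.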

POTs: Protective Optimization Technologies

by Seda Gürses, Rebekah Overdorf, Ero Balsa

In spite of their many advantages, optimization systems often neglect the economic, ethical, moral, social, and political impact they have on populations and their environments. In this paper we argue that the frameworks through which the discontents of optimization systems have been approached so far cover a narrow subset of these problems by (i) assuming that the system provider has the incentives and means to mitigate the imbalances optimization causes, (ii) disregarding problems that go beyond discrimination due to disparate treatment or impact in algorithmic decision making, and (iii) developing solutions focused on removing algorithmic biases related to discrimination.

In response, we introduce Protective Optimization Technologies: solutions that enable optimization subjects to defend themselves from unwanted consequences. We provide a framework that formalizes the design space of POTs and show how it differs from other design paradigms in the literature. We show how the framework can capture strategies developed in the wild against real optimization systems, and how it can be used to design, implement, and evaluate a POT that enables individuals and collectives to protect themselves from imbalances in a credit scoring application related to loan allocation.

https://arxiv.org/abs/1806.02711

Problem Formulation and Fairness

Samir Passi and Solon Barocas, FAT* '19: Proceedings of the Conference on Fairness, Accountability, and Transparency, pages 39-48

https://doi.org/10.1145/3287560.3287567

Tutorial: 21 fairness definitions and their politics

Arvind Narayanan

https://www.youtube.com/watch?v=jIXIuYdnyyk

Discrimination through optimization: How Facebook's ad delivery can lead to skewed outcomes

Muhammad Ali, Piotr Sapiezynski, Miranda Bogen, Aleksandra Korolova, Alan Mislove, Aaron Rieke

https://arxiv.org/abs/1904.02095

Toward ethical, transparent and fair AI/ML: a critical reading list

by Eirini Malliaraki, Feb 19 2018

https://medium.com/@eirinimalliaraki/toward-ethical-transparent-and-fair-ai-ml-a-critical-reading-list-d950e70a70ea

Critical Algorithm Studies: a Reading List

by Tarleton Gillespie and Nick Seaver

https://socialmediacollective.org/reading-lists/critical-algorithm-studies/#1.1

Governing Algorithms Reading List

https://governingalgorithms.org/resources/reading-list/

Algorithmic Studies: A (Brief) Critical Survey

by Jamie Macdonald, 31 July 2015

https://algorithmicstudies.uchri.org/literature-survey/

Auditing Algorithms From the Outside: Methods and Implications

Background Readings

https://auditingalgorithms.wordpress.com/background-readings/

algoaware.eu bibliography

https://www.algoaware.eu/bibliography/