According to Donovan Brown, "DevOps is the union of people, process, and products to enable continuous delivery of value to our end users.

The idea is to create multidisciplinary teams that work together with shared and efficient practices and tools.

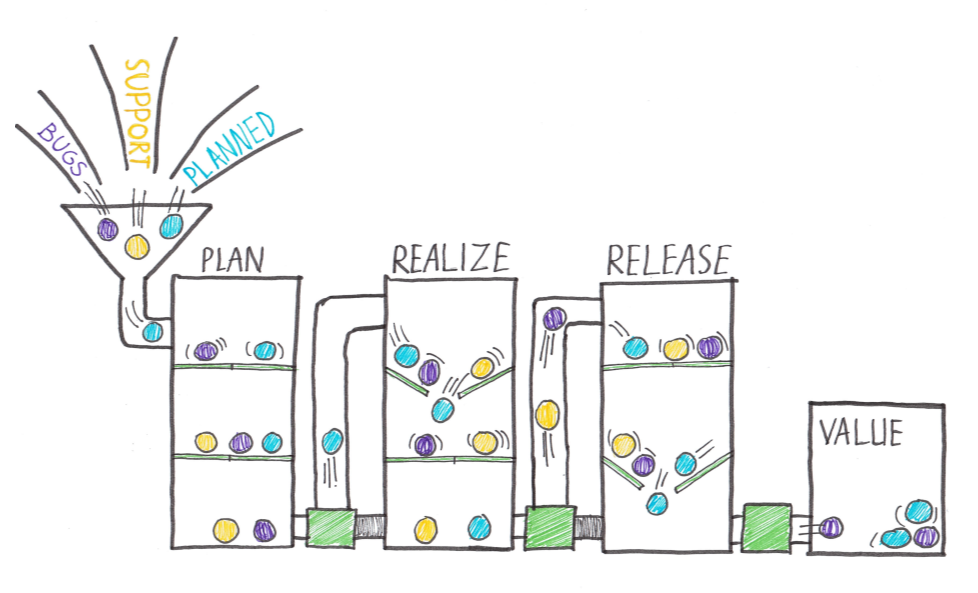

Essential DevOps practices include agile planning, continuous integration, continuous delivery, and monitoring of applications.

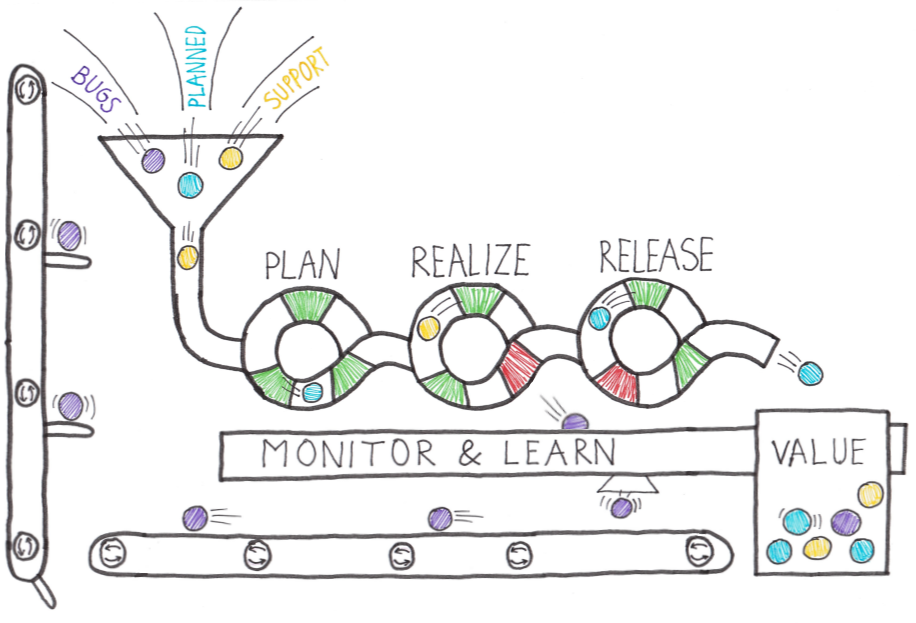

- Observing business, market, needs, current user behavior, and available telemetry data.

- Orient with the enumeration of options for what you can deliver, perhaps with experiments.

- Decide what to pursue

- Act by delivering working software to real users

Fail fast on effects that do not advance the business and double down on outcomes that support the business. Sometimes the approach is called pivot or persevere.

Your cycle time determines how quickly you can gather feedback to determine what happens in the next loop. The feedback that you collect with each cycle should be factual, actionable data.

When you adopt DevOps practices:

- You shorten your cycle time by working in smaller batches.

- Using more automation.

- Hardening your release pipeline.

- Improving your telemetry.

- Deploying more frequently.

- Optimize validated learning The more frequently you deploy, the more you can experiment.

The more opportunity you have to pivot or persevere and gain validated learning each cycle.

This acceleration in validated learning is the value of the improvement.

Think of it as the sum of progress that you achieve and the failures that you avoid.

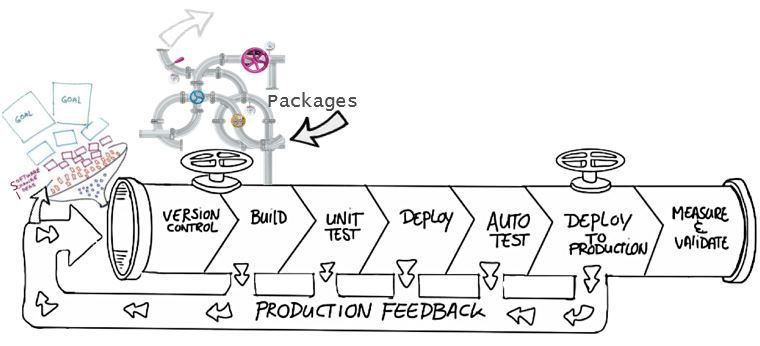

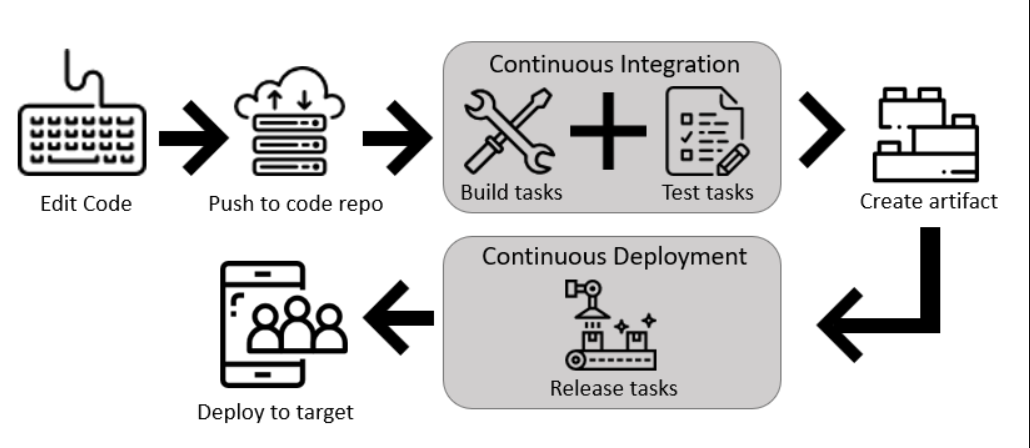

Continuous Integration drives the ongoing merging and testing of code, leading to an early finding of defects.

Other benefits include:

- less time wasted fighting merge issues

- rapid feedback for development teams.

Continuous Delivery of software solutions to production and testing environments helps organizations:

- quickly fix bugs and

- respond to ever-changing business requirements.

Version Control, usually with a Git-based Repository, enables teams worldwide to communicate effectively during daily development activities. Integrate with software development tools for monitoring activities such as deployments.

Use Agile planning and lean project management techniques to:

- Plan and isolate work into sprints.

- Manage team capacity and help teams quickly adapt to changing business needs.

A DevOps Definition of Done is working software collecting telemetry against the intended business goals.

Monitoring and Logging of running applications.

Including production environments for:

- application health and

- customer usage.

It helps organizations create a hypothesis and quickly validate or disprove strategies. Rich data is captured and stored in various logging formats.

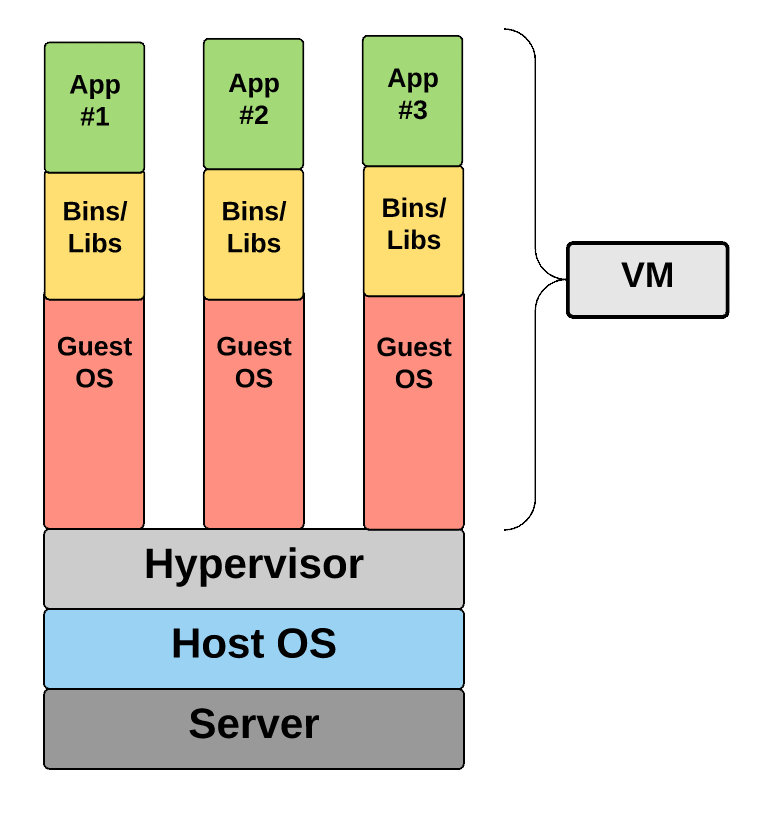

Public and Hybrid Clouds have made the impossible easy.

Use Infrastructure as a Service (IaaS) to lift and shift your existing apps or Platform as a Service (PaaS) to gain unprecedented productivity. The cloud gives you a data center without limits.

Infrastructure as Code (IaC): Enables the automation and validation of the creation and teardown of environments to help deliver secure and stable application hosting platforms.

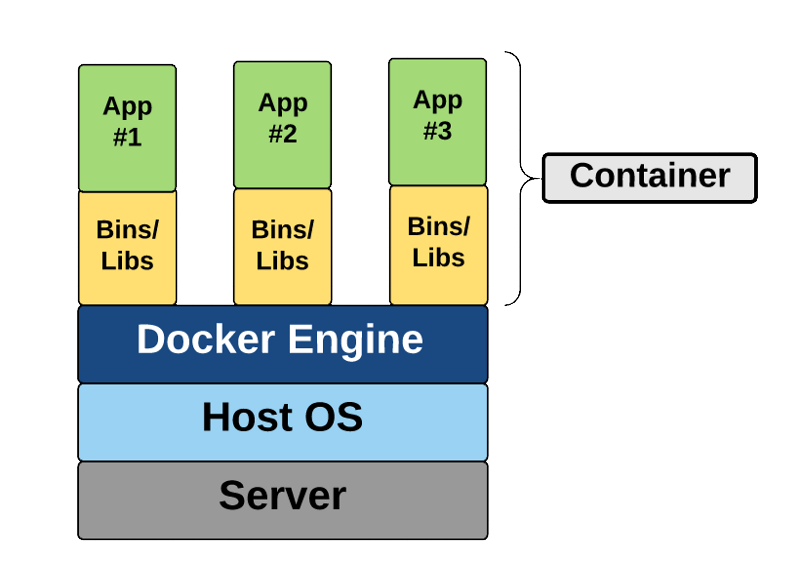

Use Microservices architecture to isolate business use cases into small reusable services that communicate via interface contracts. This architecture enables:

- scalability and

- efficiency.

"Beyond the Idea: How to Execute Innovation,"

Measurable goals also need to have timelines. Every few weeks, the improvements should be clear and measurable. Ideally, evident to the organization or its customers.

- It is easier to change plans or priorities when necessary.

- The reduced delay between doing work and getting feedback helps ensure that the learnings and feedback are incorporated quickly.

- It is easier to keep organizational support when positive outcomes are clear.

This module helps organizations decide the projects to start applying the DevOps process and tools to minimize initial resistance.

A greenfield project is one done on a green field, undeveloped land. It can seem that a greenfield DevOps project would be easier to manage and to achieve success because: There was no existing codebase. No existing team dynamics of politics. Possibly no current, rigid processes. A blank slate offers the chance to implement everything the way that you want. better chance of avoiding existing business processes that do not align with your project plans. Suppose current IT policies do not allow the use of cloud-based infrastructure. In that case, the project might be qualified for entirely new applications designed for that environment from scratch. you can sidestep internal political issues that are well entrenched.

A brownfield project is done on the used ground for other purposes. Because of the land use that has once occurred, there could be challenges reusing the land. Like existing buildings, some would be obvious but less obvious, like polluted soil.

Usually, brownfield projects come with: The baggage of existing codebases. Existing teams. A significant amount of technical debt.

The beauty of these projects is that: there's often a large gap between customer expectations and delivery. The teams involved may well realize that the status quo needs to change. They've lived the challenges and the limitations associated with what they're currently doing. The limitations will have often worn down the existing team members. For example, they are working in the past and are keen to experiment with new ideas. The system is often crucial for organizations. It might also be easier to gain more robust management buy-in for these projects because of the potential benefits delivered.

Decide when to use systems of record versus systems of engagement When selecting systems as candidates for starting a DevOps transformation, it is necessary to consider the types of systems that you operate.

Some researchers suggest that organizations often use Bimodal IT, a practice of managing two separate, coherent modes of IT delivery - one focused on stability and predictability and the other on agility.

- they provide the truth about data elements

- have historically evolved slowly and carefully

- e.g. it is crucial that a banking system accurately reflects your bank balance

- they emphasize accuracy and security.

Many organizations have other systems that are more exploratory. often use experimentation to solve new problems. are modified regularly it is a priority to make quick changes over ensuring that the changes are correct.

There is a perception that DevOps suits systems of engagement more than systems of record. The lessons from high-performing companies show that is not the case.

Both types of systems are great. It might be easier to start with a system of engagement when first beginning a DevOps Transformation. DevOps practices apply to both types of systems. The most significant outcomes often come from transforming systems of record.

In discussions around continuous delivery, we usually categorize users into three general buckets:

- Canary users voluntarily test bleeding edge features as soon as they're available. Keen to see new features as soon as they're available and highly tolerant of issues

- Early adopters who voluntarily preview releases, considered more refined than the code that exposes canary users. Similar characteristics to the Canaries. Often have work requirements that make them less tolerant of issues and interruptions to work.

- Users who consume the products after passing through canary and early adopters.

Ideal target improvements Roll out changes incrementally. When starting, it is essential to find an improvement goal that: It can be used to gain early wins. It is small enough to be achievable in a reasonable timeframe. Has benefits that are significant enough to be evident to the organization. It allows constant learning from:

- rapid feedback and

- recovering from mistakes quickly.

The aim is to build a snowball effect where each new successful outcome adds to previous successful results.

Goals needed to be:

- specific,

- measurable, and

- time-bound. Establish appropriate metrics and Key Performance Indicators (KPIs) to ensure these goals are measurable.

Metrics and KPIs that are commonly used in DevOps Projects:

- Faster outcomes

- Deployment Frequency '' Speed

- Deployment Size: How many features, stories, and bug fixes are being deployed each time?

- Lead Time: How long does it take from the creation of a work item until it is completed?

- Efficiency

- Server to Admin Ratio. Are the projects reducing the number of administrators required for a given number of servers?

- Staff Member to Customers Ratio. Is it possible for fewer staff members to serve a given number of customers?

- Application Usage. How busy is the application?

- Application Performance. Is the application performance improving or dropping? (Based upon application metrics)?

- Quality and security

- Deployment failure rates. How often do deployments (or applications) fail?

- Application failure rates. How often do application failures occur, such as configuration failures, performance timeouts, and so on?

- Mean time to recover. How quickly can you recover from a failure?

- Bug report rates. You do not want customers finding bugs in your code. Is the amount they are seeing increasing or lowering?

- Test pass rates. How well is your automated testing working?

- Defect escape rate. What percentage of defects are being found in production?

- Availability. What percentage of time is the application truly available for customers?

- Service level agreement achievement. Are you meeting your service level agreements (SLAs)?

- Mean time to detection. If there is a failure, how long does it take for it to be detected?

- Culture

- Employee morale. Are employees happy with the transformation and where the organization is heading? Are they still willing to respond to further changes? This metric can be challenging to measure but is often done by periodic, anonymous employee surveys.

- Retention rates. Is the organization losing staff?

It is crucial to choose metrics that focus on :

- specific business outcomes and

- achieve a return on investment and increased business value.

This module explores agile development practices and helps to define and to configure teams and tools for collaboration.

- Determining a problem.

- Analyzing the requirements.

- Building and testing the required code.

- The delivery outcome to users.

The waterfall model follows a sequential order. A project development team only moves to the next development phase or testing if the previous step is completed successfully.

Drawbacks:

- Usually, the project takes a long time, and the outcome may no longer match the customer's needs.

- Customers often don't know what they want until they see it or can't explain what they need.

Agile methodology constantly emphasizes:

- adaptive planning and

- early delivery with continual improvement. It encourages rapid and flexible responses to changes as they occur.

Development needs to favor

- individuals and interactions over processes and tools.

- Working software over comprehensive documentation.

- Customer collaboration over contract negotiation.

- Respond to changes over following a plan.

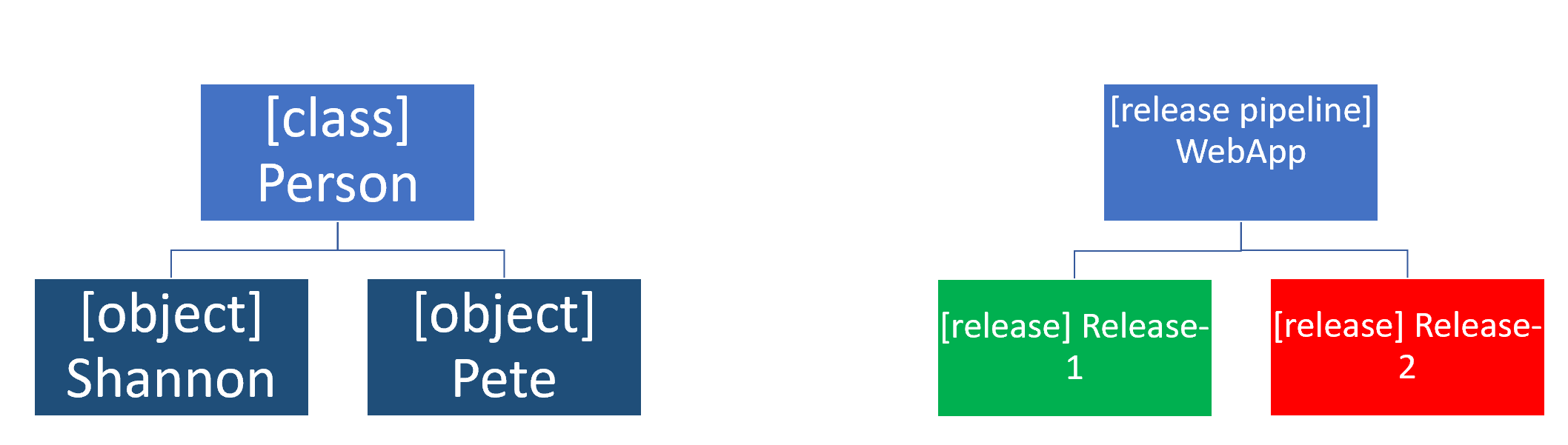

Agile software development methods are based on releases and iterations:

One release might consist of several iterations. Each iteration is like a small independent project. After being estimated and prioritization: Features, bug fixes, enhancements, and refactoring width are assigned to a release. And then assigned again to a specific iteration within the release, generally on a priority basis. At the end of each iteration, there should be tested working code. In each iteration, the team must focus on the outcomes of the previous iteration and learn from them. Having teams focused on shorter-term outcomes is that teams are also less likely to waste time over-engineering features. Or allowing unnecessary scope creep to occur.

Agile software development helps teams keep focused on business outcomes.

| Waterfall | Agile |

|---|---|

| Divided into distinct phases. | Separates the project development lifecycle into sprints. |

| It can be rigid. | Known for flexibility. |

| All project development phases, such as design, development, and test, are completed once. | It follows an iterative development approach so that each phase may appear more than once. |

| Define requirements at the start of the project with little change expected. | Requirements are expected to change and evolve. |

| Focus on completing the project. | Focus on meeting customers' demands. |

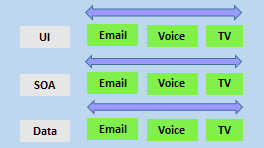

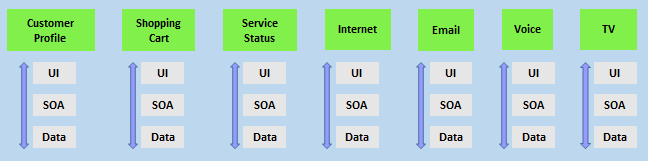

- divide teams according to the software architecture. In this example, the teams have been divided into the user interface, service-oriented architecture, and data teams:

- span the architecture and

- are aligned with skillsets or disciplines

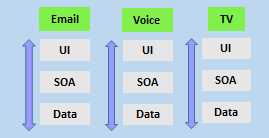

Vertical teams have been shown to provide more good outcomes in Agile projects. Each product must have an identified owner.

Another key benefit of the vertical team structure is that scaling can occur by adding teams. In this example, feature teams have been created rather than just project teams:

For a successful DevOps transformation, the aim is to find team members with the following characteristics:

- They:

- already think there is a need to change.

- have previously shown an ability to innovate.

- are already well respected within the organization.

- have a broad knowledge of the organization and how it operates.

- already believe that DevOps practices are what is needed.

When they first start an agile transformation, many teams hire external coaches or mentors.

Agile coaches help teams or individuals to adopt agile methods or to improve the current techniques and practices.

They must be agents of change by helping people understand how they work and encouraging them to adopt new approaches.

Agile coaches typically work with more than one team and remove any roadblocks from inside or outside the organization.

There is more than one type of agile coach.

- Some coaches are technical experts who aim to show staff members how to apply specific concepts—for example, test-driven development and continuous integration or deployment. These coaches might do peer programming sessions with staff members.

- Other coaches are focused on agile processes, determining requirements, and managing work activities. They might help how to run effective stand-up and review meetings. Some coaches may themselves act as scrum masters. They might mentor staff in how to fill these roles. Over time, though, team members need to develop an ability to mentor each other. Teams should aim to be self-organizing. Team members are often expected to learn as they work and to acquire skills from each other. To make it effective, though, the work itself needs to be done collaboratively, not by individuals working by themselves.

Collaboration tooling Agile teams commonly use the following collaboration tools:

Teams (Microsoft): A group chat application from Microsoft. It provides a combined location with workplace chat, meetings, notes, and storage of file attachments. A user can be a member of many teams.

Slack: A commonly used tool for collaboration in Agile and DevOps teams. From a single interface, it provides a series of separate communication channels that can be organized by project, team, or topic. Conversations are kept and are searchable. It is straightforward to add both internal and external team members. Slack integrates with many third-party tools like GitHub for source code and DropBox for document and file storage.

Jira: A commonly used tool for planning, tracking, releasing, and reporting.

Asana: A standard tool designed to keep team plans, progress, and discussions in a single place. It has strong capabilities around timelines and boards.

Glip: An offering from Ring Central that provides chat, video, and task management.

Other standard tools with collaboration offerings include ProofHub, RedBooth, Trello, DaPulse, and many others.

Not all tools need to be digital tools. Many teams use whiteboards to collaborate on ideas, index cards for recording stories, and sticky notes for moving around tasks.

Even when digital tools are available, it might be more convenient to use these physical tools during stand-up and other meetings.

These tools usually include:

- Project planning and execution monitoring abilities (including how to respond to impediments).

- Automation for stand-up meetings.

- Management and tracking of releases.

- A way to record and work with the outcomes of retrospectives.

Many include Kanban boards and detailed sprint planning options.

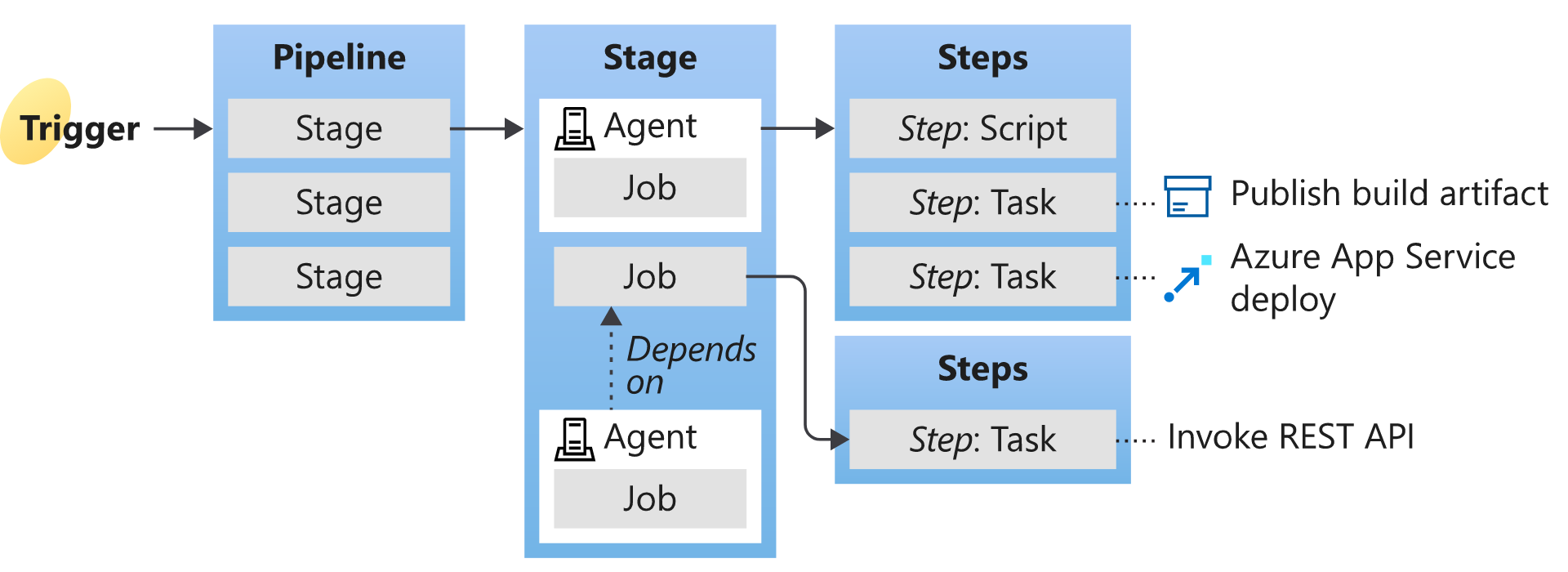

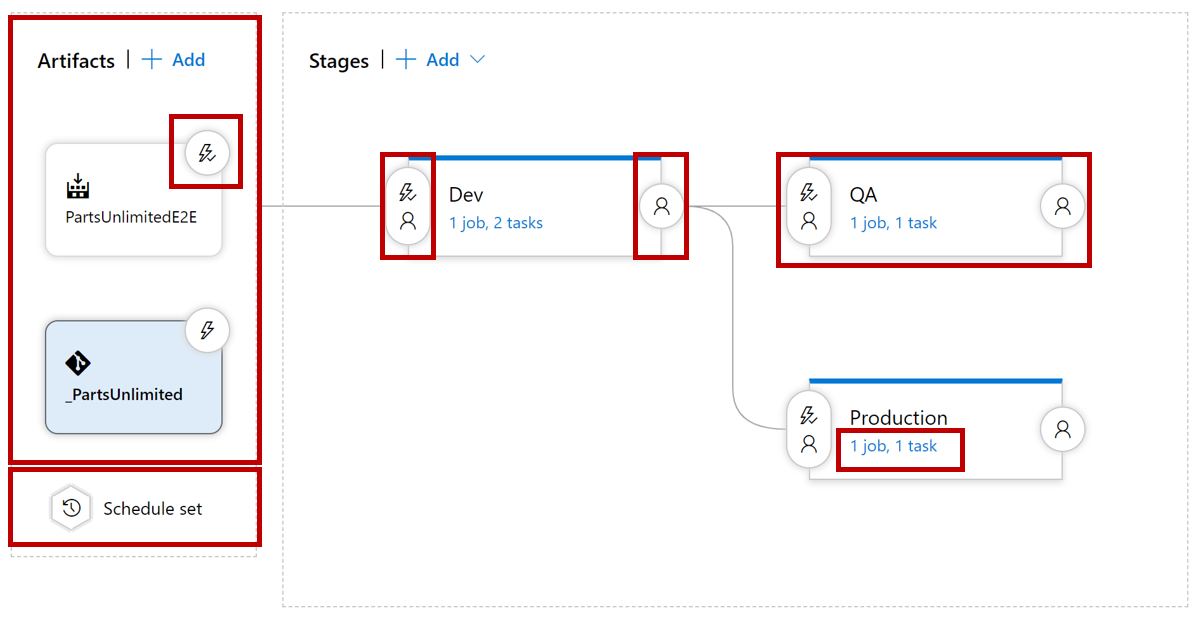

As a complete CI/CD system, we have Azure DevOps and GitHub that includes:

Flexibility in Kanban boards. Traceability through Backlogs. Customizability in dashboards. Built-in scrum boards. Integrability directly with code repositories. Code changes can be linked directly to tasks or bugs.

It might seem odd to add screen recording tools to this list. Still, they are beneficial when:

- Work with remote team members.

- Recording bugs in action.

- Building walkthroughs and tutorials that demonstrate actual or potential features.

A screen recorder is built into Windows, but other common ones include SnagIt, Camtasia, OBS, and Loom.

This module explores Azure DevOps and GitHub tools and helps organizations define their work management tool and licensing strategy.

Azure DevOps is a Software as a service (SaaS) platform from Microsoft that provides an end-to-end DevOps toolchain for developing and deploying software.

Azure DevOps includes a range of services covering the complete development life cycle.

- Azure Boards: agile planning, work item tracking, visualization, and reporting tool.

- Azure Pipelines: a language, platform, and cloud-agnostic CI/CD platform-supporting containers or Kubernetes.

- Azure Repos: provides cloud-hosted private git repos.

- Azure Artifacts: provides integrated package management with support for Maven, npm, Python, and NuGet package feeds from public or private sources.

- Azure Test Plans: provides an integrated planned and exploratory testing solution.

GitHub is a Software as a service (SaaS) platform from Microsoft that provides Git-based repositories and DevOps tooling for developing and deploying software.

GitHub provides a range of services for software development and deployment.

- Codespaces: Provides a cloud-hosted development environment (based on Visual Studio Code) that can be operated from within a browser or external tools. Eases cross-platform development.

- Repos: Public and private repositories based upon industry-standard Git commands.

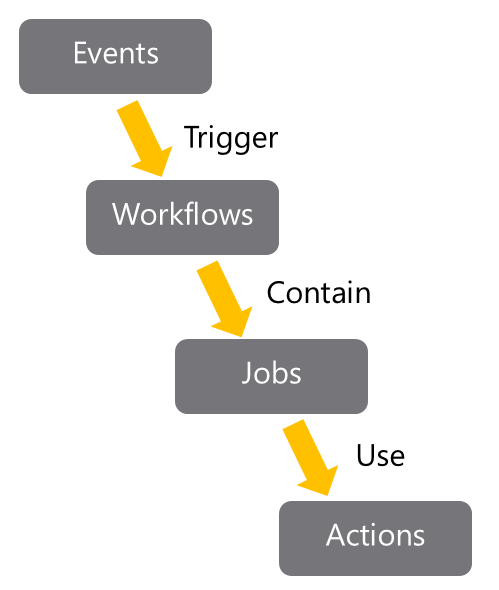

- Actions: Allows for the creation of automation workflows. These workflows can include environment variables and customized scripts.

- Packages: The majority of the world's open-source projects are already contained in GitHub repositories. GitHub makes it easy to integrate with this code and with other third-party offerings.

- Security: Provides detailed code scanning and review features, including automated code review assignment.

To protect and secure your data, you can use:

Microsoft account. GitHub account. Azure Active Directory (Azure AD).

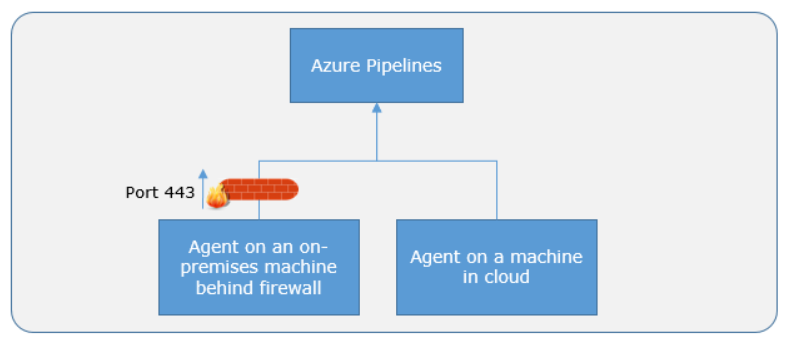

Use personal access tokens (PAT) for tools that don't directly support Microsoft accounts or Azure AD for authentication. You can use it if you want them to integrate with Azure DevOps.

Personal access tokens are also helpful when establishing access to command-line tools, external tools, and tasks in build pipelines.

Azure DevOps is pre-configured with default security groups.

Default permissions are assigned to the default security groups. You can also configure access at the organization, collection, and project or object levels.

In the organization settings in Azure DevOps, you can configure app access policies. Based on your security policies, you might allow alternate authentication methods, enable third-party applications to access via OAuth, or even allow anonymous access to some projects.

For even tighter control, you can use Conditional Access policies. These offer simple ways to help secure resources such as Azure DevOps when using Azure Active Directory for authentication.

Conditional Access policies such as multifactor authentication can help to minimize the risk of compromised credentials.

As part of a Conditional Access policy, you might require:

- Security group membership.

- A location or network identity.

- A specific operating system.

- A managed device or other criteria.

Third-party organizations offer commercial tooling to assist with migrating other work management tools like:

Aha. BugZilla. ClearQuest. And others to Azure DevOps.

Azure Test Plans track manual testing for sprints and milestones, allowing you to follow when that testing is complete.

Azure DevOps also has a Test Feedback extension available in the Visual Studio Marketplace. The extension is used to help teams do exploratory testing and provide feedback.

Other helpful testing tools: Apache JMeter is open-source software written in Java and designed to load test, and measure performance.

Pester is a tool that can automate the testing of PowerShell code.

SoapUI is another testing framework for SOAP and REST testing.

If you are using Microsoft Test Manager, you should plan to migrate to Azure Test Plans instead.

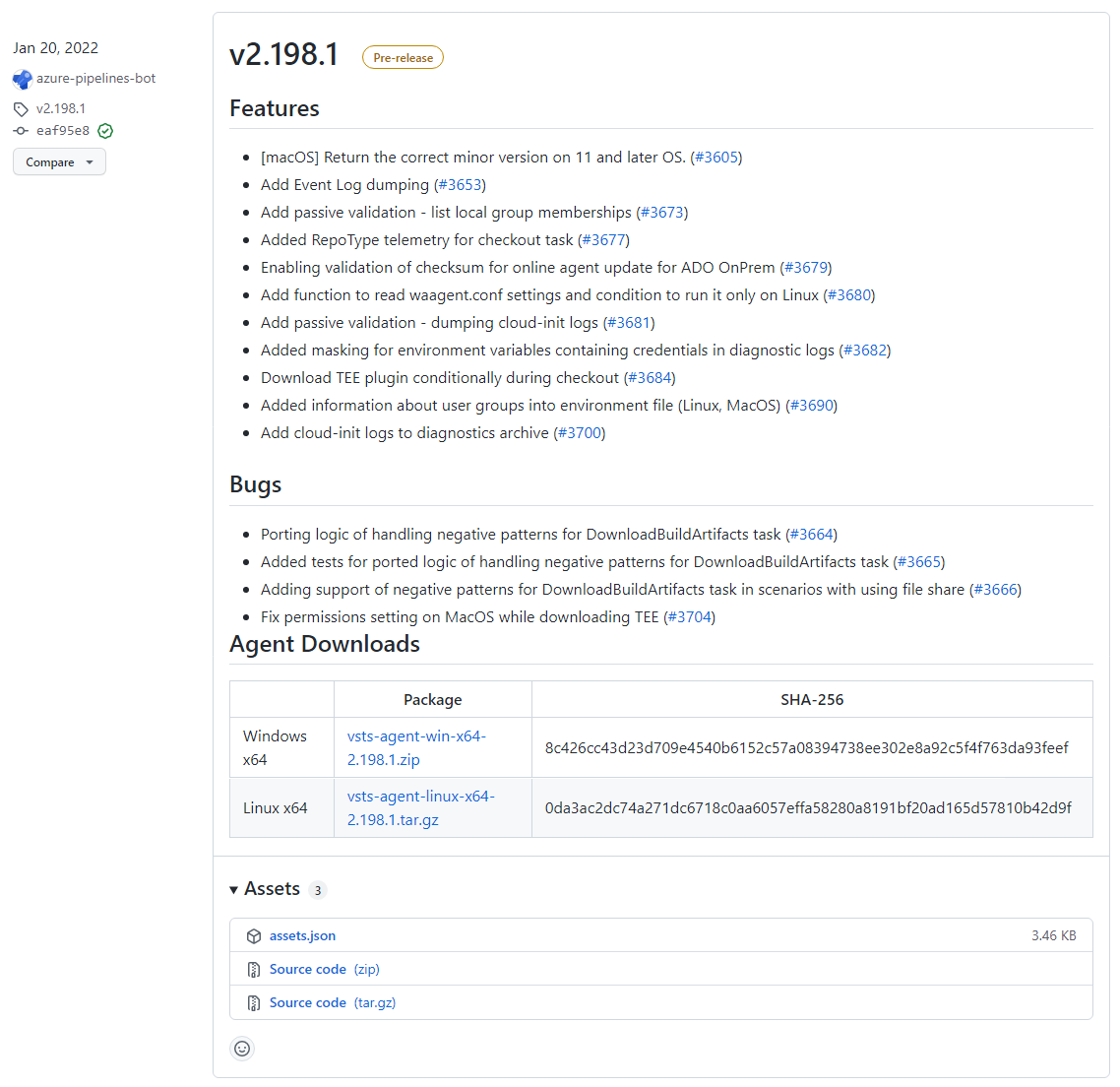

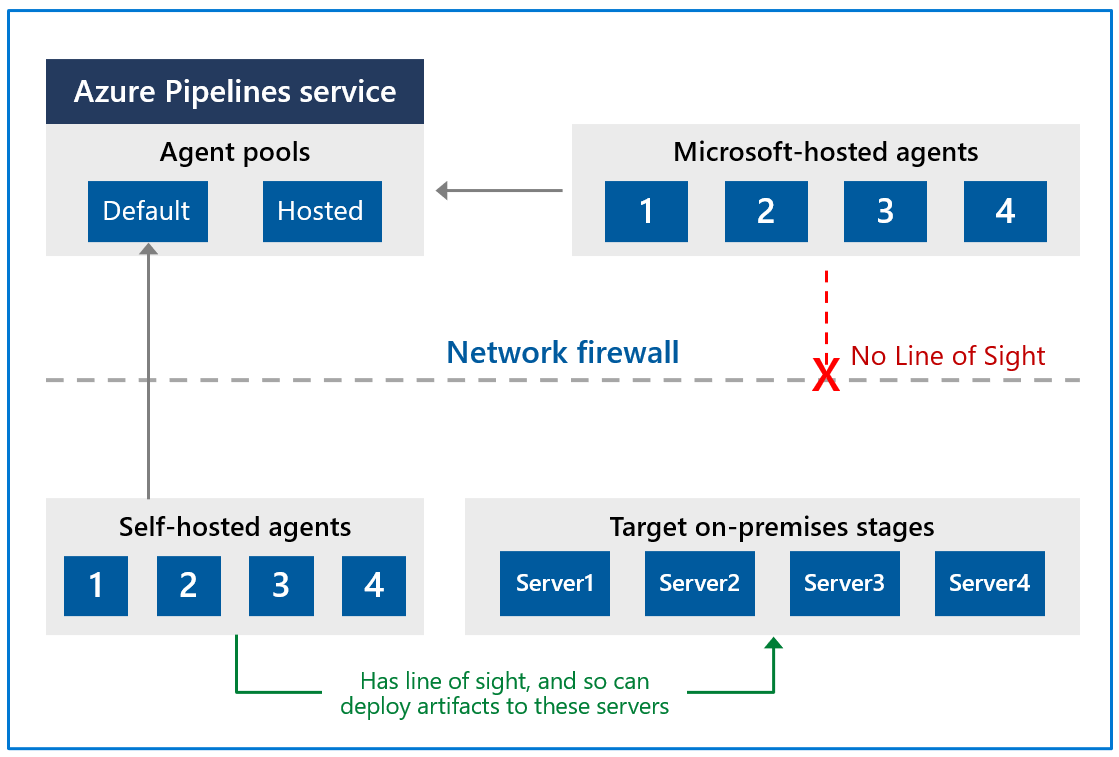

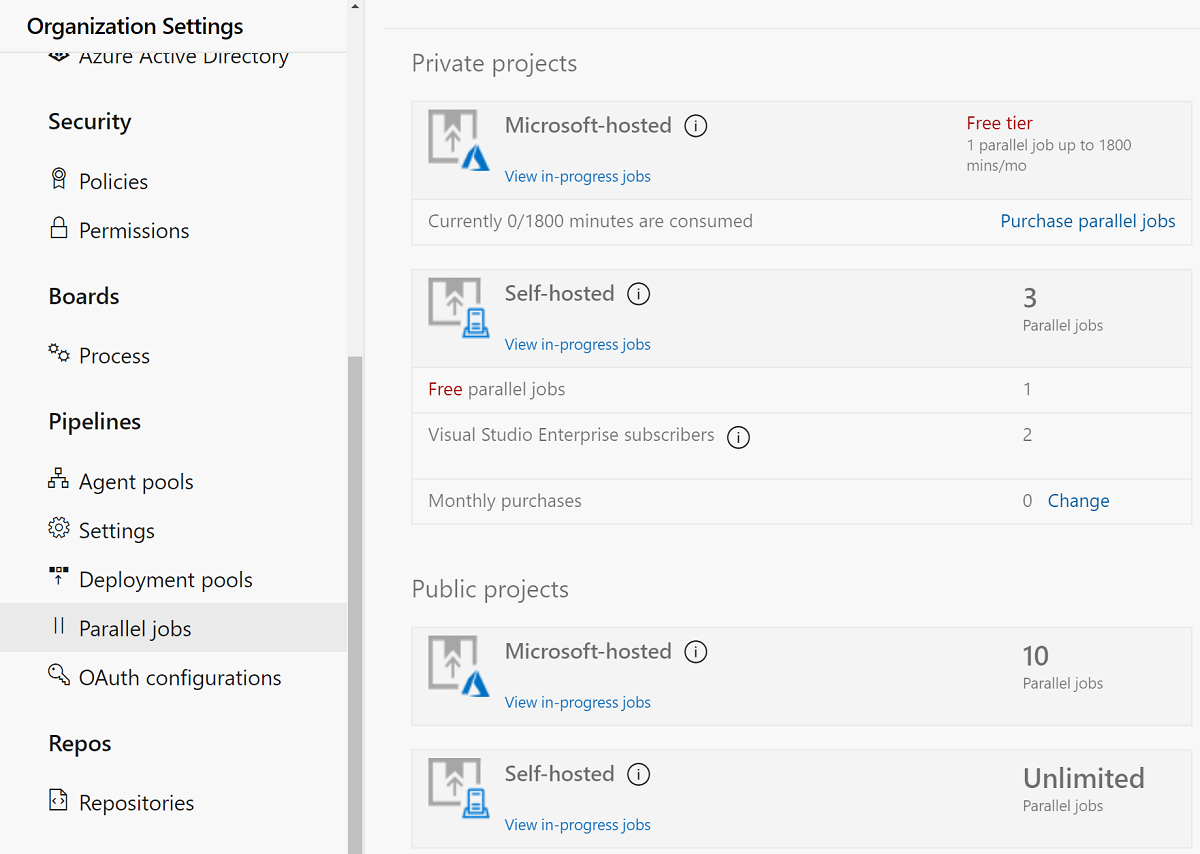

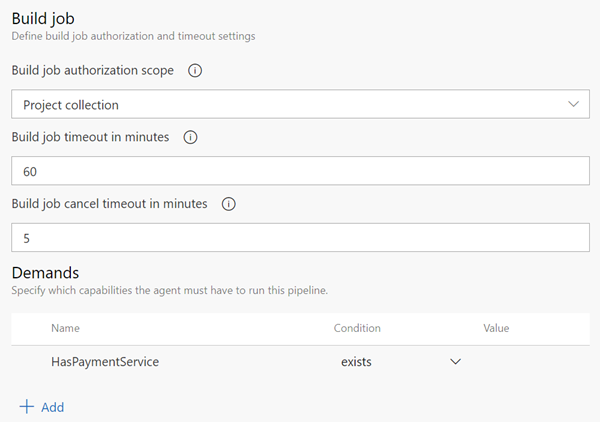

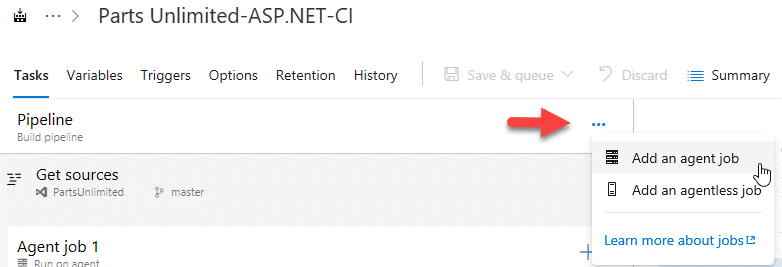

When designing a license management strategy, you first need to understand your progress in the DevOps implementation phase.

If you have a draft of the architecture, you're planning for the DevOps implementation; you already know part of the resources to consume.

For example, you started with a version control-implementing Git and created some pipelines to build and release your code.

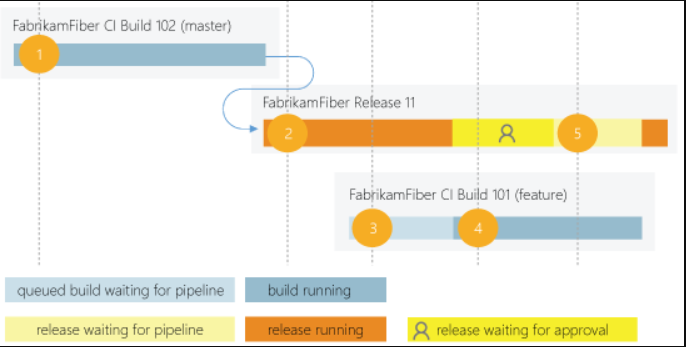

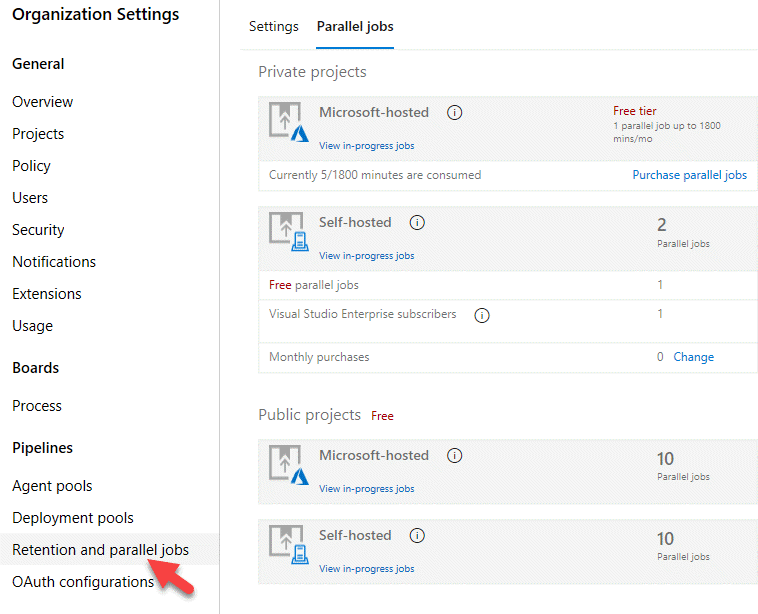

If you have multiple teams building their solutions, you don't want to wait in the queue to start building yours.

Probably, you want to pay for parallel jobs and make your builds run in parallel without depending on the queue availability.

To consider:

What phase are you in? How many people are using the feature? How long are you willing to wait if in the queue for pipelines? Is this urgent? Is this a validation only? Should all users access all features? Are they Stakeholders? Basic users? Do they already have a Visual Studio license? Do you have an advanced Package Management strategy? Maybe you need more space for Artifacts.

This module introduces you to GitHub Projects, GitHub Project Boards and Azure Boards. It explores ways to link Azure Boards and GitHub, configure GitHub Projects and Project views, and manage work with GitHub Projects.

During the application or project lifecycle, it's crucial to plan and prioritize work. With Project boards, you can control specific feature work, roadmaps, release plans, etc.

Project boards are made up of issues, pull requests, and notes categorized as cards you can drag and drop into your chosen columns. The cards contain relevant metadata for issues and pull requests, like labels, assignees, the status, and who opened it.

There are different types of project boards:

User-owned project boards: Can contain issues and pull requests from any personal repository. Organization-wide project boards: Can contain issues and pull requests from any repository that belongs to an organization. Repository project boards: Are scoped to issues and pull requests within a single repository.

| Templates | Description |

|---|---|

| Basic kanban | Track your tasks with: To do, In progress, and Done columns. |

| Automated kanban | Cards automatically move between: To do, In progress, and Done columns. |

| Automated kanban with review | Cards automatically move between: To do, In progress, and Done columns, with extra triggers for pull request review status. |

| Bug triage | Triage and prioritize bugs with: To do, High priority, Low priority, and Closed columns. |

Projects are a new, customizable and flexible tool version of projects for planning and tracking work on GitHub.

A project is a customizable spreadsheet in which you can configure the layout by filtering, sorting, grouping your issues and PRs, and adding custom fields to track metadata.

You can use different views such as Board or spreadsheet/table.

You can use custom fields in your tasks. For example:

A date field to track target ship dates. A number field to track the complexity of a task. A single select field to track whether a task is Low, Medium, or High priority. A text field to add a quick note. An iteration field to plan work week-by-week, including support for breaks.

is a customizable tool to manage software projects supporting Agile, Scrum, and Kanban processes by default. Track work, issues, and code defects associated with your project. Also, you can create your custom process templates and use them to create a better and more customized experience for your company.

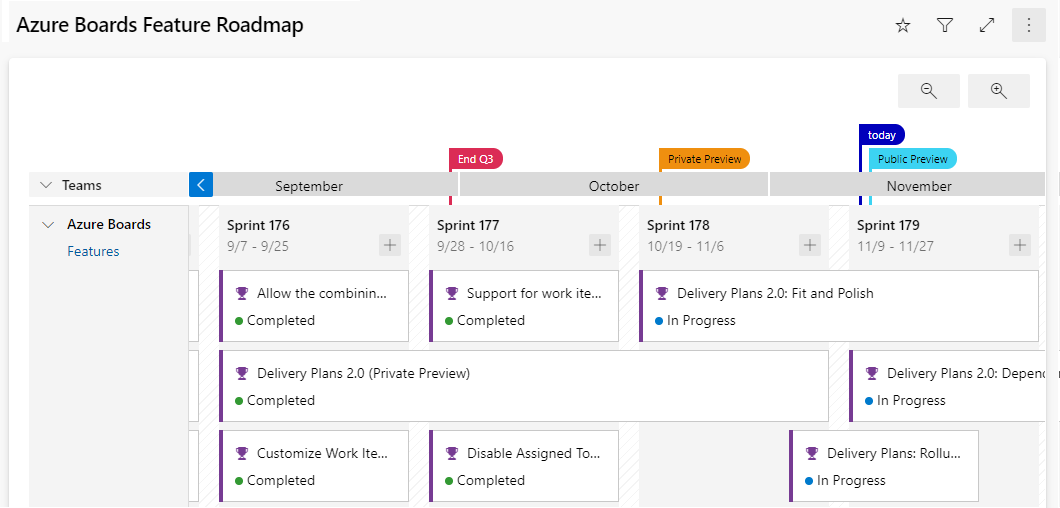

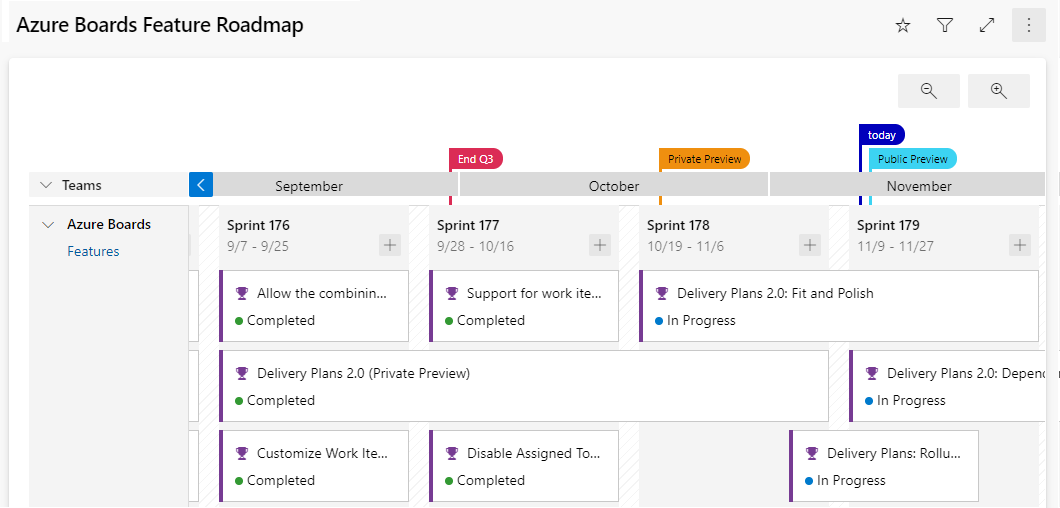

You have multiple features and configurations to support your teams, such as calendar views, configurable dashboards, and integrated reporting.

The Kanban board is one of several tools that allows you to add, update, and filter user stories, bugs, features, and epics.

You can track your work using the default work item types such as user stories, bugs, features, and epics. It's possible to customize these types or create your own. Each work item provides a standard set of system fields and controls, including Discussion for adding and tracking comments, History, Links, and Attachments.

If you need to create reports or a list of work with specific filters, you can use the queries hub to generate custom lists of work items.

Queries support the following tasks:

Find groups of work items with something in common. Triage work to assign to a team member or sprint and set priorities. Perform bulk updates. View dependencies or relationships between work items. Create status and trend charts that you can optionally add to dashboards.

It's possible to create another view with deliverables and track dependencies across several teams in a calendar view using Delivery Plans.

Delivery plans are fully interactive, supporting the following tasks:

View up to 15 team backlogs, including a mix of backlogs and teams from different projects. View custom portfolio backlogs and epics. View work that spans several iterations. Add backlog items from a plan. View rollup progress of features, epics, and other portfolio items. View dependencies that exist between work items.

Use GitHub, track work in Azure Boards Use Azure Boards to plan and track your work and GitHub as source control for software development.

Connect Azure Boards with GitHub repositories, enabling linking GitHub commits, pull requests, and issues to work items in Boards.

Use GitHub, track work in Azure Boards Use Azure Boards to plan and track your work and GitHub as source control for software development.

Connect Azure Boards with GitHub repositories, enabling linking GitHub commits, pull requests, and issues to work items in Boards.

GitHub Command Palette - GitHub Docs

A Source control system (or version control system) allows developers to collaborate on code and track changes. Use version control to save your work and coordinate code changes across your team. Source control is an essential tool for multi-developer projects.

The version control system saves a snapshot of your files (history) so that you can review and even roll back to any version of your code with ease. Also, it helps to resolve conflicts when merging contributions from multiple sources.

For most software teams, the source code is a repository of invaluable knowledge and understanding about the problem domain that the developers have collected and refined through careful effort.

Source control protects source code from catastrophe and the casual degradation of human error and unintended consequences.

Version control is about keeping track of every change to software assets—tracking and managing the who, what, and when. Version control is the first step needed to assure quality at the source, ensure flow and pull value, and focus on the process. All of these create value not just for the software teams but ultimately for the customer.

Version control is a solution for managing and saving changes made to any manually created assets. If changes are made to the source code, you can go back in time and easily roll back to previous-working versions.

Version control also makes experimenting easy and, most importantly, makes collaboration possible. Without version control, collaborating over source code would be a painful operation.

There are several perspectives on version control.

For developers, it's a daily enabler for work and collaboration to happen. It's part of the daily job, one of the most-used tools.

For management, the critical value of version control is in:

IP security. Risk management. Time-to-market speed through Continuous Delivery, where version control is a fundamental enabler.

Source control is the fundamental enabler of continuous delivery."

Some of the advantages of using source control are,

Create workflows. Version control workflows prevent the chaos of everyone using their development process with different and incompatible tools. Version control systems provide process enforcement and permissions, so everyone stays on the same page. Work with versions. Every version has a description in the form of a comment. These descriptions help you follow changes in your code by version instead of by individual file changes. Code stored in versions can be viewed and restored from version control at any time as needed. It makes it easy to base new work on any version of code. Collaboration. Version control synchronizes versions and makes sure that your changes do not conflict with other changes from your team. Your team relies on version control to help resolve and prevent conflicts, even when people make changes simultaneously. Maintains history of changes. Version control keeps a record of changes as your team saves new versions of your code. This history can be reviewed to find out who, why, and when changes were made. The history gives you the confidence to experiment since you can roll back to a previous good version at any time. The history lets your base work from any code version, such as fixing a bug in an earlier release. Automate tasks. Version control automation features save your team time and generate consistent results. Automate testing, code analysis, and deployment when new versions are saved to version control.

Reusability – why do the same thing twice? Reuse of code is a common practice and makes building on existing assets simpler. Traceability – Audits are not just for fun; in many industries, it is a legal matter. All activities must be traced, and managers can produce reports when needed. Traceability also makes debugging and identifying root cause easier. Additionally, it helps with feature reuse as developers can link requirements to implementation. Manageability – Can team leaders define and enforce workflows, review rules, create quality gates and enforce QA throughout the lifecycle? Efficiency – are we using the right resources for the job, minimizing time and effort? This one is self-explanatory. Collaboration – When teams work together, quality tends to improve. We catch one another's mistakes and can build on each other's strengths. Learning – Organizations benefit when they invest in employees learning and growing. It is important for onboarding new team members, the lifelong learning of seasoned members, and the opportunity for workers to contribute to the bottom line and the industry.

- Make small changes. In other words, commit early and commit often. Be careful not to commit any unfinished work that could break the build.

- Do not commit personal files. It could include application settings or SSH keys. Often personal files are committed accidentally but cause problems later when other team members work on the same code.

- Update often and right before pushing to avoid merge conflicts.

- Verify your code change before pushing it to a repository; ensure it compiles and tests are passing.

- Pay close attention to commit messages, as it will tell you why a change was made. Consider committing messages as a mini form of documentation for the change.

- Link code changes to work items. It will concretely link what was created to why it was created—or modified by providing traceability across requirements and code changes.

- No matter your background or preferences, be a team player and follow agreed conventions and workflows. Consistency is essential and helps ensure quality, making it easier for team members to pick up where you left off, review your code, debug, and so on.

This module describes different source control systems, such as Git and Team Foundation Version Control (TFVC), and helps with the initial steps for Git utilization.

Centralized source control systems are based on the idea that there's a single "central" copy of your project somewhere (probably on a server). Programmers will check in (or commit) their changes to this central copy.

"Committing" a change means to record the difference in the central system. Other programmers can then see this change.

Also, it's possible to pull down the change. The version control tool will automatically update the contents of any files that were changed.

Most modern version control systems deal with "changesets," which are a group of changes (possibly too many files) that should be treated as a cohesive whole.

Programmers no longer must keep many copies of files on their hard drives manually. The version control tool can talk to the central copy and retrieve any version they need on the fly.

Some of the most common-centralized version control systems you may have heard of or used are Team Foundation Version Control (TFVC), CVS, Subversion (or SVN), and Perforce.

If working with a centralized source control system, your workflow for adding a new feature or fixing a bug in your project will usually look something like this:

- Get the latest changes other people have made from the central server.

- Make your changes, and make sure they work correctly.

- Check in your changes to the main server so that other programmers can see them.

"Distributed" source control or version control systems (DVCS for short) have become the most important.

The three most popular are:

- Git,

- Mercurial,

- and Bazaar.

These systems don't necessarily rely on a central server to store all the versions of a project's files. Instead, every developer clones a repository copy and has the project's complete history on their local storage. This copy (or "clone") has all the original metadata.

Getting new changes from a repository is called pulling. Moving your changes to a repository is called "pushing. You move changesets (changes to file groups as coherent wholes), not single-file diffs.

The act of cloning an entire repository gives distributed source control tools several advantages over centralized systems:

- Doing actions other than pushing and pulling changesets is fast because the tool only needs to access the local storage, not a remote server.

- Committing new changesets can be done locally without anyone else seeing them. Once you have a group of changesets ready, you can push all of them at once.

- Everything but pushing and pulling can be done without an internet connection. So, you can work on a plane, and you won't be forced to commit several bug fixes as one large changeset.

- Since each programmer has a full copy of the project repository, they can share changes with one, or two other people to get feedback before showing the changes to everyone.

There are only two major inherent disadvantages to using a distributed system:

- If your project contains many large, binary files that can't be efficiently compressed, the space needed to store all versions of these files can accumulate quickly.

- If your project has a long history (50,000 changesets or more), downloading the entire history can take an impractical amount of time and disk space.

Git is a distributed version control system. Each developer has a copy of the source repository on their development system. Developers can commit each set of changes on their dev machine.

Branches are lightweight. When you need to switch contexts, you can create a private local branch. You can quickly switch from one branch to another to pivot among different variations of your codebase. Later, you can merge, publish, or dispose of the branch.

Team Foundation Version Control (TFVC) is a centralized version control system.

Typically, team members have only one version of each file on their dev machines. Historical data is maintained only on the server. Branches are path-based and created on the server.

TFVC has two workflow models:

- Server workspaces - Before making changes, team members publicly check out files. Most operations require developers to be connected to the server. This system helps lock workflows. Other software that works this way includes Visual Source Safe, Perforce, and CVS. You can scale up to huge codebases with millions of files per branch—also, large binary files with server workspaces.

- Local workspaces - Each team member copies the latest codebase version with them and works offline as needed. Developers check in their changes and resolve conflicts as necessary. Another system that works this way is Subversion.

Developers would gain the following benefits by moving to Git:

- Community In many circles, Git has come to be the expected version control system for new projects.

If your team is using Git, odds are you will not have to train new hires on your workflow because they will already be familiar with distributed development.

-

Distributed development In TFVC, each developer gets a working copy that points back to a single central repository. Git, however, is a distributed version control system. Instead of a working copy, each developer gets their local repository, complete with an entire history of commits.

-

Trunk-based development One of the most significant advantages of Git is its branching capabilities. Unlike centralized version control systems, Git branches are cheap and easy to merge.

Trunk-based development provides an isolated environment for every change to your codebase. When developers want to start working on something—no matter how large or small—they create a new branch. It ensures that the main branch always contains production-quality code.

Using trunk-based development is more reliable than directly-editing production code, but it also provides organizational benefits.

They let you represent development work at the same granularity as your agile backlog.

For example, you might implement a policy where each work item is addressed in its feature branch.

-

Pull requests A pull request is a way to ask another developer to merge one of your branches into their repository.

It makes it easier for project leads to keep track of changes and lets developers start discussions around their work before integrating it with the rest of the codebase.

Since they are essentially a comment thread attached to a feature branch, pull requests are incredibly versatile.

When a developer gets stuck with a complex problem, they can open a pull request to ask for help from the rest of the team.

Instead, junior developers can be confident that they are not destroying the entire project by treating pull requests as a formal code review.

-

Faster release cycle A faster release cycle is the ultimate result of feature branches, distributed development, pull requests, and a stable community.

These capabilities promote an agile workflow where developers are encouraged to share more minor changes more frequently.

In turn, changes can get pushed down the deployment pipeline faster than the standard of the monolithic releases with centralized version control systems.

As you might expect, Git works well with continuous integration and continuous delivery environments.

Git hooks allow you to run scripts when certain events occur inside a repository, which lets you automate deployment to your heart’s content.

You can even build or deploy code from specific branches to different servers.

For example, you might want to configure Git to deploy the most recent commit from the develop branch to a test server whenever anyone merges a pull request into it.

Combining this kind of build automation with peer review means you have the highest possible confidence in your code as it moves from development to staging to production.

There are three common objections I often hear to migrating to Git:

-

I can overwrite history.

-

I have large files.

-

There is a steep learning curve.

-

Overwriting history Git technically does allow you to overwrite history - but like any helpful feature, if misused can cause conflicts.

If your teams are careful, they should never have to overwrite history.

If you are synchronizing to Azure Repos, you can also add a security rule that prevents developers from overwriting history by using the explicit "Force Push" permissions.

Every source control system works best when developers understand how it works and which conventions work.

While you cannot overwrite history with Team Foundation Version Control (TFVC), you can still overwrite code and do other painful things.

- Large files Git works best with repos that are small and do not contain large files (or binaries).

Every time you (or your build machines) clone the repo, they get the entire repo with its history from the first commit.

It is great for most situations but can be frustrating if you have large files.

Binary files are even worse because Git cannot optimize how they are stored.

That is why Git LFS was created.

It lets you separate large files of your repos and still has all the benefits of versioning and comparing.

Also, if you are used to storing compiled binaries in your source repos, stop!

Use Azure Artifacts or some other package management tool to store binaries for which you have source code.

However, teams with large files (like 3D models or other assets) can use Git LFS to keep the code repo slim and trimmed.

- Learning curve There is a learning curve. If you have never used source control before, you are probably better off when learning Git. I have found that users of centralized source control (TFVC or SubVersion) battle initially to make the mental shift, especially around branches and synchronizing.

Once developers understand how Git branches work and get over the fact that they must commit and then push, they have all the basics they need to succeed in Git.

Azure Repos is a set of version control tools that you can use to manage your code.

Azure Repos provides two types of version control:

- Git: distributed version control

- Team Foundation Version Control (TFVC): centralized version control

- Use free private Git repositories, pull requests, and code search: Get unlimited private Git repository hosting and support for TFVC that scales from a hobby project to the world’s largest repository.

- Support for any Git client: Securely connect with and push code into your Git repository from any IDE, editor, or Git client.

- Web hooks and API integration: Add validations and extensions from the marketplace or build your own-using web hooks and REST APIs.

- Semantic code search: Quickly find what you are looking for with a code-aware search that understands classes and variables.

- Collab to build better code: Do more effective Git code reviews with threaded discussion and continuous integration for each change. Use forks to promote collaboration with inner source workflows.

- Automation with built-in CI/CD: Set up continuous integration/continuous delivery (CI/CD) to automatically trigger builds, tests, and deployments. Including every completed pull request using Azure Pipelines or your tools.

- Protection of your code quality with branch policies: Keep code quality high by requiring code reviewer sign-out, successful builds, and passing tests before merging pull requests. Customize your branch policies to maintain your team’s high standards.

- Usage of your favorite tools: Use Git and TFVC repositories on Azure Repos with your favorite editor and IDE.

GitHub is a Git repository hosting service that adds many of its features.

GitHub is a Git repository hosting service that adds many of its features.

While Git is a command-line tool, GitHub provides a Web-based graphical interface.

It also provides access control and several collaboration features, such as wikis and essential task management tools for every project.

The process is simple:

- Create an empty Git repo (or multiple empty repos).

- Get-latest from TFS.

- Copy/reorganize the code into the empty Git repos.

- Commit and push, and you are there!

If you have shared code, you need to create builds of the shared code to publish to a package feed. And then consume those packages in downstream applications.

If you are on TFVC and in Azure DevOps, you have the option of a simple single-branch import.

- Click on the Import repository from the Azure Repos top-level drop-down menu to open the dialog. - Then enter the path to the branch you are migrating to (yes, you can only choose one branch).

- Select if you want history or not (up to 180 days).

- Add in a name for the repo, and the import will be triggered.

If you need to migrate more than a single branch and keep branch relationships

It's an open-source project built to synchronize Git and TFVC repositories.

GIT-TFS has the advantage that it can migrate multiple branches and preserve the relationships to merge branches in Git after you migrate.

You can quickly dry-run the migration locally, iron out any issues, and then do it for real. There are lots of flexibilities with this tool.

If you are on Subversion, you can use GIT-SVN to import your Subversion repo similarly to GIT-TFS.

If Chocolatey is already installed on your computer, run choco install gittfs

Add the GIT-TFS folder path to your PATH. You could also set it temporary (the time of your current terminal session) using: set PATH=%PATH%;%cd%\GitTfs\bin\Debug

You need .NET 4.5.2 and maybe the 2012 or 2013 version of Team Explorer (or Visual Studio). It depends on the version of Azure DevOps you want to target.

Clone the whole repository (wait for a while.) :

git tfs clone http://tfs:8080/tfs/DefaultCollection $/some_project

Codespaces is a cloud-based development environment that GitHub hosts. It is essentially an online implementation of Visual Studio Code.

Codespaces allows developers to work entirely in the cloud.

Codespaces even will enable developers to contribute from tablets and Chromebooks.

Because it is based on Visual Studio Code, the development environment is still rich with:

- Syntax highlighting.

- Autocomplete.

- Integrated debugging.

- Direct Git integration. Developers can create a codespace (or multiple codespaces) for a repository. Each codespace is associated with a specific branch of a repository.

A repository is simply a place where the history of your work is stored.

It often lives in a .git subdirectory of your working copy.

There are two philosophies on organizing your repos: Monorepo or multiple repos.

-

Monorepos is a source control pattern where all the source code is kept in a single repository. It's super simple to give all your employees access to everything in one shot. Just clone it down, and done.

-

Multiple repositories refer to organizing your projects into their separate repository.

The multiple repos view, in extreme form, is that if you let every subteam live in its repo. They have the flexibility to work in their area however they want, using whatever libraries, tools, development workflow, and so on, will maximize their productivity.

The cost is that anything not developed within a given repo must be consumed as if it was a third-party library or service. It would be the same even if it were written by someone sitting one desk over.

If you find a bug in your library, you must fix it in the appropriate repo. Get a new artifact published, and then return to your repo to change your code. In the other repo, you must deal with a different code base, various libraries, tools, or even a different workflow. Or maybe you must ask someone who owns that system to make the change for you and wait for them to get around to it.

In Azure DevOps, a project can contain multiple repositories. It's common to use one repository for each associated solution.

A changelog is a file that has a list of changes made to a project, usually in date order. The typical breakdown is to separate a list of versions, and then within each version, show:

- Added features

- Modified/Improved features

- Deleted features

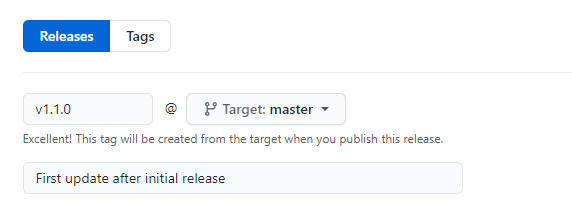

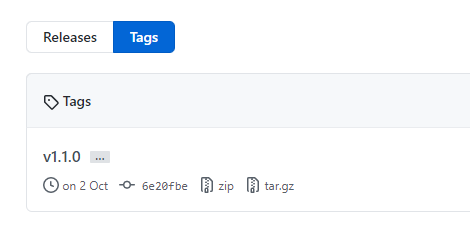

Some teams will post changelogs as blog posts; others will create a CHANGELOG.md file in a GitHub repository.

The fundamental difference between the monorepo and multiple repos philosophies boils down to a difference about what will allow teams working together on a system to go fastest.

-

Using native GitHub commands The git log command can be useful for automatically creating content.

Example: create a new section per version:

git log [options] vX.X.X..vX.X.Y | helper-script > projectchangelogs/X.X.Ybash -

Git changelog - based on Python.

-

GitHub changelog generator - based on Gem.

When evaluating a workflow for your team, you must consider your team's culture. You want the workflow to:

-

enhance your team's effectiveness and

-

not be a burden that limits productivity. Some things to consider when evaluating a Git workflow are:

-

Does this workflow scale with team size?

-

Is it easy to undo mistakes and errors with this workflow?

-

Does this workflow impose any new unnecessary cognitive overhead on the team?

Most popular Git workflows will have some sort of centralized repo that individual developers will push and pull from.

Below is a list of some popular Git workflows

These comprehensive workflows offer more specialized patterns about managing branches for feature development, hotfixes, and eventual release.

-

Trunk-based development

Trunk-based development is a logical extension of Centralized Workflow.

The core idea behind the Feature Branch Workflow is that all feature development should take place in a dedicated branch instead of the main branch.

This encapsulation makes it easy for multiple developers to work on a particular feature without disturbing the main codebase.

It also means the main branch should never contain broken code, which is a huge advantage for continuous integration environments.

Encapsulating feature development also makes it possible to use pull requests, which are a way to start discussions around a branch. They allow other developers to sign out on a feature before it gets integrated into the official project. Or, if you get stuck in the middle of a feature, you can open a pull request asking for suggestions from your colleagues.

Pull requests make it incredibly easy for your team to comment on each other's work. Also, feature branches can (and should) be pushed to the central repository. It allows sharing a feature with other developers without touching any official code.

Since the main is the only "special" branch, storing several feature branches on the central repository doesn't pose any problems. It's also a convenient way to back up everybody's local commits.

The trunk-based development Workflow assumes a central repository, and the main represents the official project history.

Instead of committing directly to their local main branch, developers create a new branch every time they start work on a new feature.

Feature branches should have descriptive names, like new-banner-images or bug-91. The idea is to give each branch a clear, highly focused purpose.

Git makes no technical distinction between the main and feature branches, so developers can edit, stage, and commit changes to a feature branch.

-

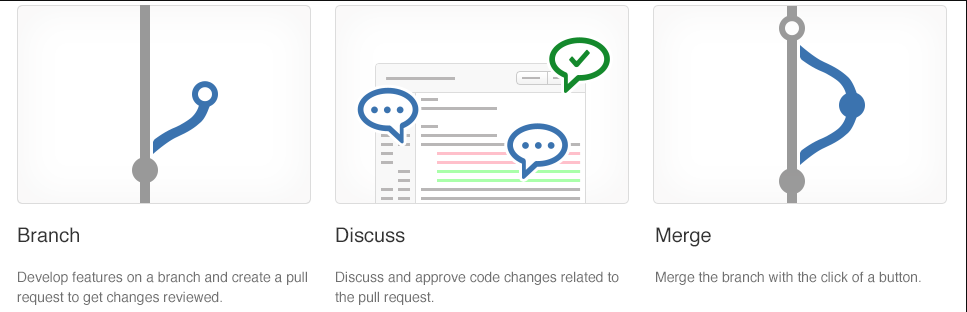

Create a branch When you create a branch in your project, you're creating an environment where you can try out new ideas.

Changes you make on a branch don't affect the main branch, so you're free to experiment and commit changes, safe in the knowledge that your branch won't be merged until it's ready to be reviewed by someone you're collaborating with.

Your branch name should be descriptive (for example, refactor-authentication, user-content-cache-key, make-retina-avatars) so that others can see what is being worked on.

-

Add commits Whenever you add, edit, or delete a file, you're making a commit and adding them to your branch.

This process of adding commits keeps track of your progress as you work on a feature branch.

Commits also create a transparent history of your work that others can follow to understand what you've done and why.

Each commit has an associated commit message, which explains why a particular change was made.

Furthermore, each commit is considered a separate unit of change. It lets you roll back changes if a bug is found or you decide to head in a different direction.

Commit messages are essential, especially since Git tracks your changes and then displays them as commits once pushed to the server.

By writing clear commit messages, you can make it easier for other people to follow along and provide feedback.

-

Open a pull request The Pull Requests start a discussion about your commits. Because they're tightly integrated with the underlying Git repository, anyone can see exactly what changes would be merged if they accept your request.

You can open a Pull Request at any point during the development process when:

- You've little or no code but want to share some screenshots or general ideas.

- You're stuck and need help or advice.

- You're ready for someone to review your work. Using the @mention system in your Pull Request message, you can ask for feedback from specific people or teams.

Pull Requests help contribute to projects and for managing changes to shared repositories.

If you're using a Fork & Pull Model, Pull Requests provide a way to notify project maintainers about the changes you'd like them to consider.

If you're using a Shared Repository Model, Pull Requests help start code review and conversation about proposed changes before they're merged into the main branch.

-

Discuss and review your code

-

Deploy With Git, you can deploy from a branch for final testing in an environment before merging to the main.

Once your pull request has been reviewed and the branch passes your tests, you can deploy your changes to verify them. You can roll it back if your branch causes issues by deploying the existing main.

-

Merge Once your changes have been verified, it's time to merge your code into the main branch.

Once merged, Pull Requests preserve a record of the historical changes to your code. Because they're searchable, they let anyone go back in time to understand why and how a decision was made.

By incorporating specific keywords into the text of your Pull Request, you can associate issues with code. When your Pull Request is merged, the related issues can also close.

This workflow helps organize and track branches focused on business domain feature sets.

Other Git workflows, like the Git Forking Workflow and the Gitflow Workflow, are repo-focused and can use the Git Feature Branch Workflow to manage their branching models.

-

-

Forking workflow The Forking Workflow is fundamentally different than the other workflows.

Instead of using a single server-side repository to act as the "central" codebase, it gives every developer a server-side repository.

It means that each contributor has two Git repositories:

- A private local one.

- A public server-side one

Let's cover the principles of what we suggest:

-

The main branch:

- The main branch is the only way to release anything to production.

- The main branch should always be in a ready-to-release state.

- Protect the main branch with branch policies.

- Any changes to the main branch flow through pull requests only.

- Tag all releases in the main branch with Git tags.

-

The feature branch:

- Use feature branches for all new features and bug fixes.

- Use feature flags to manage long-running feature branches.

- Changes from feature branches to the main only flow through pull requests.

- Name your feature to reflect its purpose.

bugfix/description

features/feature-name

features/feature-area/feature-name

hotfix/description

users/username/description

users/username/workitem-

Pull requests:

- Review and merge code with pull requests.

- Automate what you inspect and validate as part of pull requests.

- Tracks pull request completion duration and set goals to reduce the time it takes.

This module presents pull requests for collaboration and code reviews using Azure DevOps and GitHub mobile for pull request approvals.

It helps understand how pull requests work and how to configure them.

Pull requests let you tell others about changes you've pushed to a GitHub repository.

Once a pull request is sent, interested parties can review the set of changes, discuss potential modifications, and even push follow-up commits if necessary.

Pull requests are commonly used by teams and organizations collaborating using the Shared Repository Model.

Everyone shares a single repository, and topic branches are used to develop features and isolate changes.

Many open-source projects on GitHub use pull requests to manage changes from contributors.

They help provide a way to notify project maintainers about changes one has made.

Also, start code review and general discussion about a set of changes before being merged into the main branch.

Pull requests combine the review and merge of your code into a single collaborative process.

Once you're done fixing a bug or new feature in a branch, create a new pull request.

Add the team members to the pull request so they can review and vote on your changes.

Use pull requests to review works in progress and get early feedback on changes.

There's no commitment to merge the changes as the owner can abandon the pull request at any time.

The common benefits of collaborating with pull requests are:

- Get your code reviewed,

- Give great feedback, and

- Protect branches with policies.

Branch policies give you a set of out-of-the-box policies that can be applied to the branches on the server.

Any changes being pushed to the server branches need to follow these policies before the changes can be accepted.

Policies are a great way to enforce your team's code quality and change-management standards.

The out-of-the-box branch policies include several policies, such as:

- build validation and

- enforcing a merge strategy.

Only focus on the branch policies needed to set up a code-review workflow in this recipe.

-

Open the branches view for the myWebApp Git repository in the parts-unlimited team portal. Select the main branch, and from the pull-down, context menu choose Branch policies

-

In the policies view, It presents out-of-the-box policies. Set the minimum number of reviewers to 1.

The Allow requestors to approve their own changes option allows the submitter to self-approve their changes.

It's OK for mature teams, where branch policies are used as a reminder for the checks that need to be performed by the individual.

- Use the review policy with the comment-resolution policy. It allows you to enforce that the code review comments are resolved before the changes are accepted. The requester can take the feedback from the comment and create a new work item and resolve the changes. It at least guarantees that code review comments aren't lost with the acceptance of the code into the main branch:

This module examines technical debt, complexity, quality metrics, and plans for effective code reviews and code quality validation.

There are five key traits to measure for higher quality.

- Reliability

- measures the probability that a system will run without failure over a specific period of operation.

- It relates to the number of defects and availability of the software.

- Several defects can be measured by running a static analysis tool. Software availability can be measured using the mean time between failures (MTBF).

Low defect counts are crucial for developing a reliable codebase.

-

Maintainability

- measures how easily software can be maintained. It relates to the codebase's size, consistency, structure, and complexity. Ensuring maintainable source code relies on several factors, such as testability and understandability. Some metrics you may consider to improve maintainability are the number of stylistic warni ngs and Halstead complexity measures. Both automation and human reviewers are essential for developing maintainable codebases.

-

Testability

- Testability measures how well the software supports testing efforts.

- It relies on how well you can control, observe, isolate, and automate testing, among other factors.

- can be measured based on how many test cases you need to find potential faults in the system.

- The size and complexity of the software can impact testability.

- applying methods at the code level such as cyclomatic complexity can help you improve the testability of the component.

-

Portability

- measures how usable the same software is in different environments. It relates to platform independence.

- There are several ways you can ensure portable code.

- It's essential to regularly test code on different platforms rather than waiting until the end of development.

- It's also good to:

- set your compiler warning levels as high as possible and

- use at least two compilers.

- Enforcing a coding standard also helps with portability.

-

Reusability

- measures whether existing assets—such as code—can be used again.

- Assets are more easily reused if they have modularity or loose coupling characteristics.

- The number of interdependencies can measure reusability.

- Running a static analyzer can help you identify these interdependencies.

Complexity metrics can help in measuring quality.

Another way to understand quality is through calculating Halstead complexity measures.

Cyclomatic complexity measures the number of linearly independent paths through a program's source code.

This measure:

- Program vocabulary.

- Program length.

- Calculated program length.

- Volume.

- Difficulty.

- Effort.

The following is a list of metrics that directly relate to the quality of the code being produced and the build and deployment processes.

- Failed builds percentage - Overall, what percentage of builds are failing?

- Failed deployments percentage - Overall, what percentage of deployments are failing?

- Ticket volume - What is the overall volume of customer or bug tickets?

- Bug bounce percentage - What percentage of customer or bug tickets are reopened?

- Unplanned work percentage - What percentage of the overall work is unplanned?

Technical debt is a term that describes the future cost that will be incurred by choosing an easy solution today instead of using better practices because they would take longer to complete.

So, how does it happen?

The most common excuse is tight deadlines.

There are many causes. For example, there might be a lack of technical skills and maturity among the developers or no clear product ownership or direction.

The organization might not have coding standards at all. So, the developers didn't even know what they should be producing. The developers might not have precise requirements to target. They might be subject to last-minute requirement changes.

Necessary-refactoring work might be delayed. There might not be any code quality testing, manual or automated. In the end, it just makes it harder and harder to deliver value to customers in a reasonable time frame and at a reasonable cost.

- Lack of coding style and standards.

- Lack of or poor design of unit test cases.

- Ignoring or not understanding object oriented design principles.

- Monolithic classes and code libraries.

- Poorly envisioned the use of technology, architecture, and approach. (Forgetting that all system attributes, affecting maintenance, user experience, scalability, and others, need to be considered).

- Over-engineering code (adding or creating code that isn't required, adding custom code when existing libraries are sufficient, or creating layers or components that aren't needed).

- Insufficient comments and documentation.

- Not writing self-documenting code (including class, method, and variable names that are descriptive or indicate intent). Taking shortcuts to meet deadlines. Leaving dead code in place.

One key way to minimize the constant acquisition of technical debt is to use automated testing and assessment.

Look at one of the common tools used to assess the debt: SonarCloud.

-

For .NET developers, a common tool is NDepend. NDepend is a Visual Studio extension that assesses the amount of technical debt a developer has added during a recent development period, typically in the last hour.

-

NDepend lets you create code rules expressed as C# LINQ queries, but it has many built-in rules that detect a wide range of code smells.

-

Resharper Code Quality Analysis Resharper can make a code quality analysis from the command line. Also, be set to fail builds when code quality issues are found automatically.

Rules can be configured for enforcement across teams.

The organizational culture must let all involved feel that the code reviews are more like mentoring sessions where ideas about improving code are shared than interrogation sessions where the aim is to identify problems and blame the author.

The knowledge-sharing that can occur in mentoring-style sessions can be one of the most important outcomes of the code review process. It often happens best in small groups (even two people) rather than in large team meetings. And it's important to highlight what has been done well, not just what needs improvement.

This module describes Git hooks and their usage during the development process, implementation, and behavior.

Git hooks are a mechanism that allows code to be run before or after certain Git lifecycle events.

For example, one could hook into the commit-msg event to validate that the commit message structure follows the recommended format.

The hooks can be any executable code, including shell, PowerShell, Python, or other scripts. Or they may be a binary executable. Anything goes!

The only criteria are that hooks must be stored in the .git/hooks folder in the repo root. Also, they must be named to match the related events (Git 2.x):

- applypatch-msg

- pre-applypatch

- post-applypatch

- pre-commit

- prepare-commit-msg

- commit-msg

- post-commit

- pre-rebase

- post-checkout

- post-merge

- pre-receive

- update

- post-receive

- post-update

- pre-auto-gc

- post-rewrite

- pre-push

Some examples of where you can use hooks to enforce policies, ensure consistency, and control your environment:

- In Enforcing preconditions for merging

- Verifying work Item ID association in your commit message

- Preventing you & your team from committing faulty code

- Sending notifications to your team's chat room (Teams, Slack, HipChat, etc.)

Let's start by exploring client-side Git hooks. Navigate to the repo .git\hooks directory – you'll find that there are a bunch of samples, but they're disabled by default.

Note If you open that folder, you'll find a file called precommit.sample. To enable it, rename it to pre-commit by removing the .sample extension and making the script executable.

The script is found and executed when you attempt to commit using git commit. You commit successfully if your pre-commit script exits with a 0 (zero). Otherwise, the commit fails. If you're using Windows, simply renaming the file won't work.

Git will fail to find the shell in the chosen path specified in the script.

The problem is lurking in the first line of the script, the shebang declaration:

#!/bin/sh

On Unix-like OSs, the #! Tells the program loader that it's a script to be interpreted, and /bin/sh is the path to the interpreter you want to use, sh in this case.

Windows isn't a Unix-like OS. Git for Windows supports Bash commands and shell scripts via Cygwin.

By default, what does it find when it looks for sh.exe at /bin/sh?

Nothing, nothing at all. Fix it by providing the path to the sh executable on your system. It's using the 64-bit version of Git for Windows, so the baseline looks like this:

#!C:/Program\ Files/Git/usr/bin/sh.exe

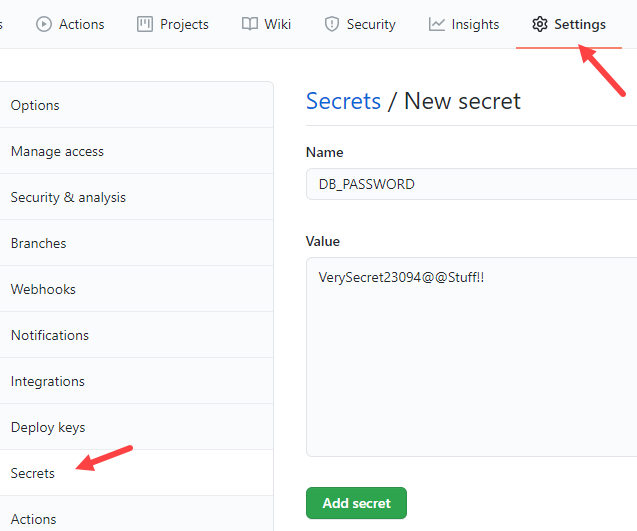

How could Git hooks stop you from accidentally leaking Amazon AWS access keys to GitHub?

You can invoke a script at pre-commit.

Using Git hooks to scan the increment of code being committed into your local repository for specific keywords:

Replace the code in this pre-commit shell file with the following code.

#!C:/Program\ Files/Git/usr/bin/sh.exe

matches=$(git diff-index --patch HEAD | grep '^+' | grep -Pi 'password|keyword2|keyword3')

if [ ! -z "$matches" ]

then

cat <<\EOT

Error: Words from the blocked list were present in the diff:

EOT

echo $matches

exit 1

fiYou don't have to build the complete keyword scan list in this script.

You can branch off to a different file by referring to it here to encrypt or scramble if you want to.

The Git diff-index identifies the code increment committed in the script. This increment is then compared against the list of specified keywords. If any matches are found, an error is raised to block the commit; the script returns an error message with the list of matches. The pre-commit script doesn't return 0 (zero), which means the commit fails.

The repo .git\hooks folder isn't committed into source control. You may wonder how you share the goodness of the automated scripts you create with the team.

The good news is that, from Git version 2.9, you can now map Git hooks to a folder that can be committed into source control.

You could do that by updating the global settings configuration for your Git repository:

Git config --global core.hooksPath '~/.githooks'

If you ever need to overwrite the Git hooks you have set up on the client-side, you can do so by using the no-verify switch:

Git commit --no-verify

So far, we've looked at the client-side Git Hooks on Windows. Azure Repos also exposes server-side hooks. Azure DevOps uses the exact mechanism itself to create Pull requests. You can read more about it at the Server hooks event reference.

This module explains how to use Git to foster inner sources across the organization, implement Fork and its workflows.

The fork-based pull request workflow is popular with open-source projects because it allows anybody to contribute to a project.

You don't need to be an existing contributor or write access to a project to offer your changes.

This workflow isn't just for open source: forks also help support inner source workflows within your company.

Before forks, you could contribute to a project-using Pull Requests.

The workflow is simple enough:

- push a new branch up to your repository,

- open a pull request to get a code review from your team, and

- have Azure Repos evaluate your branch policies.

- You can click one button to merge your pull request into main and deploy when your code is approved.

This workflow is great for working on your projects with your team. But what if you notice a simple bug in a different project within your company and you want to fix it yourself?

What if you're going to add a feature to a project that you use, but another team develops?

It's where forks come in; forks are at the heart of inner source practices.

Inner source – sometimes called internal open source – brings all the benefits of open-source software development inside your firewall.

It opens your software development processes so that your developers can easily collaborate on projects across your company.

It uses the same processes that are popular throughout the open-source software communities.

But it keeps your code safe and secure within your organization.

Microsoft uses the inner source approach heavily.

As part of the efforts to standardize a one-engineering system throughout the company – backed by Azure Repos – Microsoft has also opened the source code to all our projects to everyone within the company.

Before the move to the inner source, Microsoft was "siloed": only engineers working on Windows could read the Windows source code.

Only developers working on Office could look at the Office source code.

So, if you're an engineer working on Visual Studio and you thought that you found a bug in Windows or Office – or wanted to add a new feature – you're out of luck.

But by moving to offer inner sources throughout the company, powered by Azure Repos, it's easy to fork a repository to contribute back.

As an individual making the change, you don't need to write access to the original repository, just the ability to read it and create a fork.

A fork is a complete copy of a repository, including all files, commits, and (optionally) branches. Fork

- allows you to experiment with changes without affecting the original project freely.

- are used to propose changes to someone else's project. Or use someone else's project as a starting point for your idea.

- are a great way to support an Inner Source workflow You can create a fork to suggest changes when you don't have permission to write to the original project directly. Once you're ready to share those changes, it's easy to contribute them back-using pull requests.

A fork starts with all the contents of its upstream (original) repository.

You can include all branches or limit them to only the default branch when you create a fork.

None of the permissions, policies, or build pipelines are applied.

The new fork acts as if someone cloned the original repository, then pushed it to a new, empty repository.

After a fork has been created, new files, folders, and branches aren't shared between the repositories unless a Pull Request (PR) carries them along.

You can create PRs in either direction:

- from fork to upstream or

- upstream to fork.

The most common approach will be from fork to upstream.

The destination repository's permissions, policies, builds, and work items will apply to the PR.

For a small team (2-5 developers), we recommend working in a single repo.

Everyone should work in a topic branch, and the main should be protected with branch policies.

As your team grows more significant, you may find yourself outgrowing this arrangement and prefer to switch to a forking workflow.

We recommend the forking workflow if your repository has many casual or infrequent committees (like an open-source project).

Typically, only core contributors to your project have direct commit rights into your repository.

It would help if you asked collaborators from outside this core set of people to work from a fork of the repository.

Also, it will isolate their changes from yours until you've had a chance to vet the work.

- Create a fork.

- Clone it locally.

- Make your changes locally and push them to a branch.

- Create and complete a PR to upstream. Sync your fork to the latest from upstream.

- Navigate to the repository to fork and choose fork.

- Specify a name and choose the project where you want the fork to be created. If the repository contains many topic branches, we recommend you fork only the default branch.

- Choose the ellipsis, then Fork to create the fork.

Note You must have the Create Repository permission in your chosen project to create a fork. We recommend you create a dedicated project for forks where all contributors have the Create Repository permission. For an example of granting this permission, see Set Git repository permissions.