Aubai is an AI chatbot assistant designed to answer questions related to a company's information and policies.

There are some key components you need to understand when building a chatbot like Aubai.

- Large Language Model (LLM)

- Conversational Memory

- Prompt Engineering

- Function Calling

- Vector Embeddings

- Vector Database

There are many LLMs on the market that are fine-tuned to generate chat-like responses. Among the best of them are OpenAI's GPT-3.5 Turbo and GPT-4 Turbo (the current models at the time of writing). There are several reasons to select these models: they are among the largest models on the market, they are hosted by OpenAI and easily accessible through APIs, and apart from generating chat-like responses they also provide out-of-the-box functionality.

The context window is the maximum amount of text the model can consider at any one time when generating a response. The memory of any LLM-based system is also affected by the size of the context window, as the discussion of conversational memory will make clear. The context window is large for GPT models: for example, GPT-3.5 Turbo has 4,096 tokens, whereas GPT-4 Turbo has 128,000 tokens.

Tokens can be viewed as fragments of words. When the API processes a prompt, it breaks the input down into tokens. These tokens may not line up exactly with where words start or end; they can include spaces and parts of sub-words. To see how tokens are counted, refer to https://platform.openai.com/tokenizer. The size of the context window is very important when your use case needs a long, continuous interaction with the LLM.
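As a rough illustration of why the window size matters, the sketch below estimates a prompt's token count and checks it against the window sizes quoted above. It assumes the common rule of thumb of about 4 characters per token for English text, which is only an approximation and not a real tokenizer; `estimate_tokens`, `fits_in_context`, and the `reserved_for_reply` budget are hypothetical names chosen for this example.

```python
import math

# Context window sizes (in tokens) as quoted in this article.
CONTEXT_WINDOWS = {"gpt-3.5-turbo": 4_096, "gpt-4-turbo": 128_000}


def estimate_tokens(text: str) -> int:
    """Approximate token count using ~4 characters per token (heuristic only)."""
    return math.ceil(len(text) / 4)


def fits_in_context(text: str, model: str, reserved_for_reply: int = 500) -> bool:
    """Check that the prompt plus a reply budget fits in the model's window."""
    return estimate_tokens(text) + reserved_for_reply <= CONTEXT_WINDOWS[model]


prompt = "What is the leave policy of the company?"
print("Estimated tokens:", estimate_tokens(prompt))
print("Fits in GPT-3.5 Turbo:", fits_in_context(prompt, "gpt-3.5-turbo"))
```

For exact counts, use the tokenizer linked above instead of the character heuristic.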

Conversational memory helps an LLM-based chatbot respond as if it were having a real conversation. It lets the chatbot remember what was said before, so each question isn't treated separately and past exchanges are used to give better replies. From a programming perspective, the developer has to keep the history of the interaction with the LLM and feed it back in with each new input. Without it, every query would be treated as an entirely independent input. Here is an example of conversational memory using Aubai's Brain class.

    from brain import Brain

    # Example conversation history: a system message plus the user's first message
    conversation_history = [
        {"role": "system", "content": "LeaveBot"},
        {"role": "user", "content": "What is the leave policy of the company?"},
    ]

    # Instantiate the Brain
    leave_bot = Brain()

    # User interacts with the chatbot
    user_query_1 = "How many leave days am I entitled to per year?"
    user_query_2 = "Can I take a leave for more than 10 days consecutively?"

    # Initial interaction: join the pieces that llm_call yields into one string
    full_response_1 = " ".join(response for response in leave_bot.llm_call(chain=conversation_history, prompt=user_query_1))
    print("Full Response 1:", full_response_1)

    # Now, let's extend the conversation with the assistant's reply
    conversation_history.append({"role": "assistant", "content": "You are entitled to 15 leave days per year."})

    # User's next query, answered with the extended history
    full_response_2 = " ".join(response for response in leave_bot.llm_call(chain=conversation_history, prompt=user_query_2))
    print("Full Response 2:", full_response_2)

Check out the implementation on GitHub for more details: https://github.com/gehlotabhishek/aubai/blob/aubai-dev/code/brain.py
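Because the history is resent on every call, it eventually has to fit inside the context window. The following is a minimal sketch of one way to handle that, not Aubai's actual implementation: `ConversationMemory`, `chat`, and `fake_llm` are hypothetical names, the token estimate is a crude 4-characters-per-token heuristic, and the model call is replaced by a stand-in function so the example runs on its own.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class ConversationMemory:
    """Keeps the message history and drops the oldest turns when over budget."""

    def __init__(self, system_prompt: str, max_tokens: int = 4096) -> None:
        self.system: Message = {"role": "system", "content": system_prompt}
        self.turns: List[Message] = []
        self.max_tokens = max_tokens

    @staticmethod
    def _estimate_tokens(message: Message) -> int:
        # Rough heuristic (about 4 characters per token), not a real tokenizer.
        return len(message["content"]) // 4 + 1

    def _total_tokens(self) -> int:
        return sum(self._estimate_tokens(m) for m in self.messages())

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})
        # Drop the oldest non-system messages until the history fits the budget,
        # always keeping at least the most recent message.
        while len(self.turns) > 1 and self._total_tokens() > self.max_tokens:
            self.turns.pop(0)

    def messages(self) -> List[Message]:
        return [self.system, *self.turns]


def chat(memory: ConversationMemory, user_input: str,
         llm: Callable[[List[Message]], str]) -> str:
    """Send the full history plus the new input, then record the reply."""
    memory.add("user", user_input)
    reply = llm(memory.messages())
    memory.add("assistant", reply)
    return reply


def fake_llm(messages: List[Message]) -> str:
    # Stand-in for a real model call: reports how many messages it received.
    return f"I can see {len(messages)} messages."


memory = ConversationMemory("You are LeaveBot.")
print(chat(memory, "How many leave days am I entitled to per year?", fake_llm))
print(chat(memory, "Can I take more than 10 days consecutively?", fake_llm))
```

The second call sees the first exchange as well, which is exactly what lets a real model resolve follow-up questions. Dropping the oldest turns is the simplest trimming policy; summarizing old turns is a common alternative.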

Prompt engineering is a relatively new field that focuses on creating and refining prompts to make language models (LMs) more effective across various applications and research areas. Prompt engineering skills also help in understanding what large language models (LLMs) can and cannot do.

At its core, it is about arranging text so that an AI model can understand what the user wants to accomplish. A prompt is the task, described in plain language, that the AI needs to perform. Prompt engineering is essential for improving the performance of LLMs in tasks like question answering and chatbots. Developers use it to craft reliable techniques for communicating effectively with LLMs and other tools.

One example is chain-of-thought (CoT) prompting, where the prompt includes worked examples:

Prompt:

The odd numbers in this group add up to an even number: 4, 8, 9, 15, 12, 2, 1.

A: Adding all the odd numbers (9, 15, 1) gives 25. The answer is False.

The odd numbers in this group add up to an even number: 17, 10, 19, 4, 8, 12, 24.

A: Adding all the odd numbers (17, 19) gives 36. The answer is True.

The odd numbers in this group add up to an even number: 16, 11, 14, 4, 8, 13, 24.

A: Adding all the odd numbers (11, 13) gives 24. The answer is True.

The odd numbers in this group add up to an even number: 17, 9, 10, 12, 13, 4, 2.

A: Adding all the odd numbers (17, 9, 13) gives 39. The answer is False.

The odd numbers in this group add up to an even number: 15, 32, 5, 13, 82, 7, 1.

A:

Output:

Adding all the odd numbers (15, 5, 13, 7, 1) gives 41. The answer is False.

You can achieve something similar with zero-shot CoT prompting.

Prompt:

Note: add the special prompt 'Let's think step by step' to the query.

Question: A juggler can juggle 16 balls. Half of the balls are golf balls, and half of the golf balls are blue. How many blue golf balls are there?

Answer:

Output:

There are 16 balls in total. Half of the balls are golf balls. That means that there are 8 golf balls. Half of the golf balls are blue. That means that there are 4 blue golf balls.

To complete a task, an LLM needs to understand its details as fully as possible, and simply asking in one go may not solve the problem. This is where prompting techniques come into the picture: you add extra details related to the task, or pass a specific prompt that tells the LLM to reason differently. A widely used method is the chain-of-thought (CoT) technique. To apply it, add the prompt 'Think step by step' to your query, and the LLM will work through the task step by step. To learn about other prompting techniques, see https://www.promptingguide.ai/
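Both prompt styles shown above are just string templates, so they are easy to generate in code. The helpers below are a small sketch; `few_shot_cot_prompt` and `zero_shot_cot_prompt` are hypothetical names, and the exact layout of the prompt is an assumption modeled on the examples in this article.

```python
from typing import List, Tuple


def few_shot_cot_prompt(examples: List[Tuple[str, str]], question: str) -> str:
    """Few-shot CoT: worked question/answer pairs followed by the new question."""
    parts = [f"{q}\nA: {a}" for q, a in examples]
    parts.append(f"{question}\nA:")
    return "\n\n".join(parts)


def zero_shot_cot_prompt(question: str) -> str:
    """Zero-shot CoT: no examples, only the step-by-step trigger phrase."""
    return f"Question: {question}\nAnswer: Let's think step by step."


examples = [
    ("The odd numbers in this group add up to an even number: 4, 8, 9, 15, 12, 2, 1.",
     "Adding all the odd numbers (9, 15, 1) gives 25. The answer is False."),
    ("The odd numbers in this group add up to an even number: 17, 10, 19, 4, 8, 12, 24.",
     "Adding all the odd numbers (17, 19) gives 36. The answer is True."),
]
question = "The odd numbers in this group add up to an even number: 15, 32, 5, 13, 82, 7, 1."

print(few_shot_cot_prompt(examples, question))
print()
print(zero_shot_cot_prompt("A juggler can juggle 16 balls. Half of the balls are golf "
                           "balls, and half of the golf balls are blue. How many blue "
                           "golf balls are there?"))
```

The resulting strings would be sent as the user message; the few-shot variant costs more tokens but shows the model the reasoning format you expect.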

Function calling is a powerful way to connect an LLM to the external digital world. In an API request you can describe functions, and the model will generate a JSON object containing the arguments needed to call one or more of them. OpenAI's API does not execute the function; instead, it returns JSON that you can use in your own code to make the call.
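The division of labor described above (the model produces JSON, your code runs the function) can be sketched without calling the API at all. In the example below the model's output is simulated with a hand-written dictionary, and `get_leave_policy`, `AVAILABLE_FUNCTIONS`, and `dispatch` are hypothetical names, not Aubai's actual code. The `TOOLS` entry follows the JSON Schema style used to describe functions to OpenAI's chat API; treat the details as an assumption and check them against the current API reference.

```python
import json


def get_leave_policy(query: str) -> str:
    """Hypothetical lookup; a real bot would query the vector database here."""
    return f"[placeholder policy text for: {query}]"


# Function description sent along with the request so the model knows
# which functions exist and what arguments they take.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_leave_policy",
        "description": "Look up the company leave policy.",
        "parameters": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
}]

# Map function names to the Python callables that implement them.
AVAILABLE_FUNCTIONS = {"get_leave_policy": get_leave_policy}


def dispatch(function_call: dict) -> str:
    """Execute the function the model asked for, using its JSON arguments."""
    name = function_call["name"]
    if name not in AVAILABLE_FUNCTIONS:
        raise ValueError(f"Model requested an unknown function: {name}")
    arguments = json.loads(function_call["arguments"])
    return AVAILABLE_FUNCTIONS[name](**arguments)


# Simulated model output: a function name plus arguments as a JSON string.
model_function_call = {
    "name": "get_leave_policy",
    "arguments": '{"query": "can an employee take more than 10 days of leave?"}',
}
print(dispatch(model_function_call))
```

In a full loop, the string returned by `dispatch` would be appended to the conversation and sent back to the model, which then writes the final answer for the user.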

    sequenceDiagram
        User ->> Aubai: Can I take 13 days leave in one go?
        Aubai -->> Aubai: Analysed the query, need to call a function to get the information related to leave policy.
        Aubai ->> ChromaDB Query Engine: query: can an employee take leaves more than 10 days?
        ChromaDB Query Engine ->> Vector Database: Get a list of related documents which are most similar and near to the query in vector space
        Vector Database ->> Aubai: <list of related documents which are most similar and near to the query in vector space>
        Aubai -->> Aubai: Curate the answer based on the user's query and database information
        Aubai ->> User: You cannot take 13 days leave in one go.

Check out the function calling implementation in the Aubai GitHub repository: https://github.com/gehlotabhishek/aubai/tree/aubai-dev

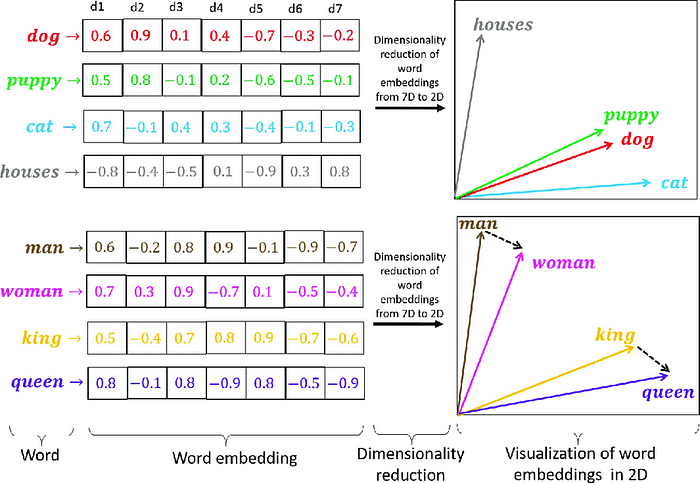

Vector embeddings, in terms of Large Language Models (LLMs), are numerical representations of words, phrases, or even entire documents in a multi-dimensional space. These embeddings capture the semantic meaning of the text, allowing the LLM to understand and process language. Each point in the space represents a different word or text snippet, and the distance or angle between points reflects the similarity between their meanings. Embeddings enable the LLM to perform tasks such as classification, translation, and question answering more accurately.

There are different models available to generate vector embeddings from text. Aubai uses SentenceTransformers to convert text into vector embeddings.
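To make "the angle between points reflects similarity" concrete, here is a small sketch of cosine similarity on toy vectors. The three-dimensional vectors are made up for illustration; real embedding models produce vectors with hundreds of dimensions.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy embeddings, invented for this example (not produced by a real model).
embeddings = {
    "annual leave": [0.9, 0.1, 0.0],
    "vacation days": [0.8, 0.2, 0.1],
    "server password": [0.0, 0.1, 0.9],
}

query = embeddings["annual leave"]
for text, vector in embeddings.items():
    print(f"{text!r}: {cosine_similarity(query, vector):.3f}")
```

Texts with related meanings end up with a similarity close to 1, while unrelated texts score close to 0.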

A vector database is a type of database that indexes and stores vector embeddings for fast retrieval and similarity search, with capabilities like CRUD operations, metadata filtering, and horizontal scaling. Aubai uses ChromaDB, an open-source vector database. Link: https://www.trychroma.com/
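The core operations of a vector database (add, delete, similarity query, metadata filter) can be illustrated with a tiny in-memory store. This is a teaching sketch, not how ChromaDB works internally: `TinyVectorStore` is a hypothetical class that scans every vector on each query, whereas real vector databases use approximate nearest-neighbor indexes to stay fast at scale. The two-dimensional vectors are made up for the example.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple


class TinyVectorStore:
    """Brute-force store: keeps rows in a dict and scans all of them per query."""

    def __init__(self) -> None:
        self._rows: Dict[str, Tuple[List[float], Dict[str, str], str]] = {}

    def add(self, id_: str, embedding: Sequence[float],
            metadata: Dict[str, str], document: str) -> None:
        # Creates the row, or overwrites it if the id already exists.
        self._rows[id_] = (list(embedding), metadata, document)

    def delete(self, id_: str) -> None:
        self._rows.pop(id_, None)

    def query(self, embedding: Sequence[float], n_results: int = 1,
              where: Optional[Dict[str, str]] = None) -> List[str]:
        """Return the ids of the nearest rows by Euclidean (L2) distance."""
        scored = []
        for id_, (vector, metadata, _document) in self._rows.items():
            # Metadata filter: skip rows that do not match every key in `where`.
            if where and any(metadata.get(k) != v for k, v in where.items()):
                continue
            scored.append((math.dist(embedding, vector), id_))
        scored.sort()
        return [id_ for _, id_ in scored[:n_results]]


store = TinyVectorStore()
store.add("leave_1", [0.9, 0.1], {"topic": "leave"}, "Leave policy, page 1")
store.add("leave_2", [0.7, 0.3], {"topic": "leave"}, "Leave policy, page 2")
store.add("travel_1", [0.1, 0.9], {"topic": "travel"}, "Travel policy, page 1")

print(store.query([1.0, 0.0], n_results=2))
print(store.query([1.0, 0.0], n_results=1, where={"topic": "travel"}))
```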

Before embedding the text, the data should be divided into chunks. The chunking strategy depends heavily on the use case; in our use case we have to store policy documents. Let's see how we divide a policy document, convert it into vector embeddings, and store it in our ChromaDB vector database.

In the loading stage, a function named data_loader() loads each PDF and does the parsing and chunking, producing one chunk per page.

    import os

    import chromadb
    import PyPDF2
    from chromadb.utils import embedding_functions
    from decouple import config  # assumption: config() comes from python-decouple

    def data_loader():
        data_dir = 'data/policy'
        if not os.path.isdir(data_dir):
            print(f"The '{data_dir}' directory does not exist in the current working directory.")
            return

        # Loading stage: read every PDF and build one chunk per page
        all_data = []
        for file in os.listdir(data_dir):
            if file.endswith(".pdf"):
                file_name = file.split(".")[0]
                pdf_url = os.path.join(data_dir, file)
                with open(pdf_url, 'rb') as pdf_file:
                    pdf_reader = PyPDF2.PdfReader(pdf_file)
                    for page_num in range(len(pdf_reader.pages)):
                        page = pdf_reader.pages[page_num]
                        page_text = page.extract_text()
                        # Join the page's lines into a single chunk; note that
                        # replace(" ", "") also strips the spaces inside each line
                        chunk = " ".join(page_text.replace(" ", "").split('\n'))
                        all_data.append({"topic": file_name, "content": chunk,
                                         "page_number": f"{file_name}_{page_num + 1}"})

        # Storing stage: recreate the collection so it starts empty
        collection_name = config('COMPANY_POLICY_DATA')
        chroma_client_host = config('CHROMA_CLIENT_HOST')
        chroma_client = chromadb.HttpClient(host=chroma_client_host, port=8000)
        try:
            chroma_client.delete_collection(name=collection_name)
        except Exception:
            # The collection may not exist yet on a first run
            pass

        # Supported distance functions; index 0 selects "l2"
        distance_functions = ["l2", "ip", "cosine"]
        sentence_transformer_ef = embedding_functions.SentenceTransformerEmbeddingFunction(model_name="multi-qa-mpnet-base-dot-v1")
        collection = chroma_client.create_collection(name=collection_name, embedding_function=sentence_transformer_ef, metadata={"hnsw:space": distance_functions[0]})

        # One document, one metadata entry, and one id per page chunk
        documents = [data['content'] for data in all_data]
        metadata = [{"topic": data['topic']} for data in all_data]
        ids = [data['page_number'] for data in all_data]
        collection.add(documents=documents, metadatas=metadata, ids=ids)

Full code:

    import os

    import chromadb
    import PyPDF2
    from chromadb.utils import embedding_functions
    from decouple import config  # assumption: config() comes from python-decouple

    def data_loader():
        data_dir = 'data/policy'
        if not os.path.isdir(data_dir):
            print(f"The '{data_dir}' directory does not exist in the current working directory.")
            return False

        # Loading stage: read every PDF and build one chunk per page
        all_data = []
        for file in os.listdir(data_dir):
            if file.endswith(".pdf"):
                file_name = file.split(".")[0]
                pdf_url = os.path.join(data_dir, file)
                with open(pdf_url, 'rb') as pdf_file:
                    pdf_reader = PyPDF2.PdfReader(pdf_file)
                    for page_num in range(len(pdf_reader.pages)):
                        page = pdf_reader.pages[page_num]
                        page_text = page.extract_text()
                        # Join the page's lines into a single chunk; note that
                        # replace(" ", "") also strips the spaces inside each line
                        chunk = " ".join(page_text.replace(" ", "").split('\n'))
                        all_data.append({"topic": file_name, "content": chunk,
                                         "page_number": f"{file_name}_{page_num + 1}"})

        # Storing stage: recreate the collection so it starts empty
        collection_name = config('COMPANY_POLICY_DATA')
        chroma_client_host = config('CHROMA_CLIENT_HOST')
        chroma_client = chromadb.HttpClient(host=chroma_client_host, port=8000)
        try:
            chroma_client.delete_collection(name=collection_name)
        except Exception:
            # The collection may not exist yet on a first run
            pass

        # Supported distance functions; index 0 selects "l2"
        distance_functions = ["l2", "ip", "cosine"]
        sentence_transformer_ef = embedding_functions.SentenceTransformerEmbeddingFunction(model_name="multi-qa-mpnet-base-dot-v1")
        collection = chroma_client.create_collection(name=collection_name, embedding_function=sentence_transformer_ef, metadata={"hnsw:space": distance_functions[0]})

        # One document, one metadata entry, and one id per page chunk
        documents = [data['content'] for data in all_data]
        metadata = [{"topic": data['topic']} for data in all_data]
        ids = [data['page_number'] for data in all_data]
        collection.add(
            documents=documents,
            metadatas=metadata,
            ids=ids
        )
        return True

    data_loader()
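The loader above treats each PDF page as one chunk. When pages are long, a finer-grained strategy may retrieve better; one common option is fixed-size chunks with overlap, so that a sentence cut at a boundary still appears whole in a neighboring chunk. The sketch below is an alternative under stated assumptions, not part of Aubai: `chunk_text` is a hypothetical helper, it splits on whitespace rather than real tokens, and the default sizes are arbitrary.

```python
from typing import List


def chunk_text(text: str, chunk_size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into chunks of `chunk_size` words, sharing `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")

    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once this chunk has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_text(sample, chunk_size=4, overlap=1):
    print(chunk)
```

Each resulting chunk could be stored with an id such as the file name plus a chunk index, mirroring the page-based ids used by data_loader().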